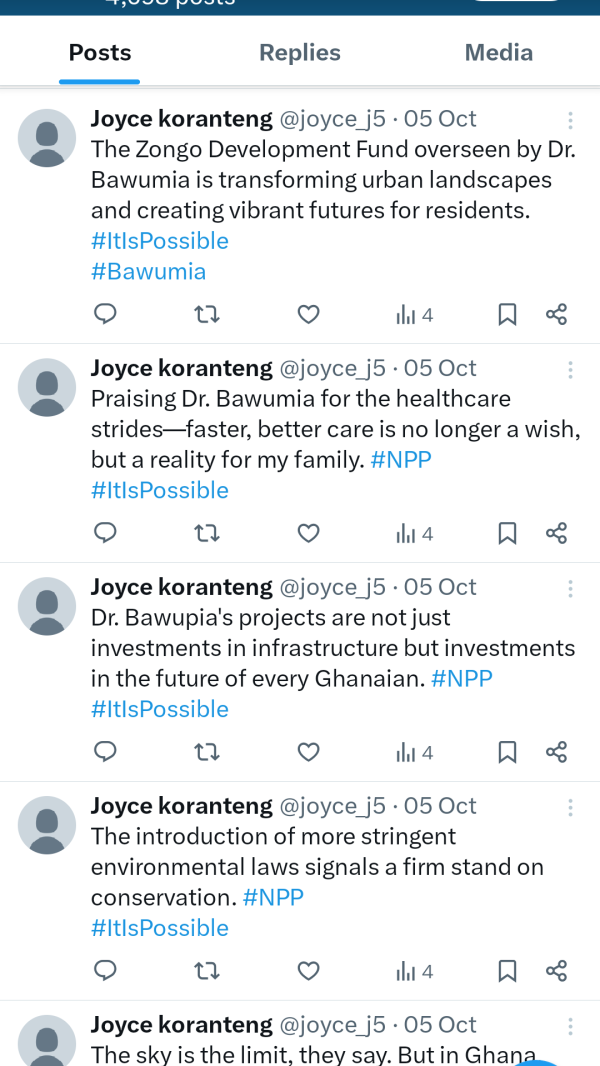

Description: A network of 171 bot accounts on X is alleged to have used ChatGPT to generate political content supporting Ghana’s New Patriotic Party (NPP) and its presidential candidate, Mahamudu Bawumia, ahead of the December 2024 election. The AI-generated posts reportedly praised Bawumia while spreading disinformation targeting the opposition candidate, John Mahama, of the National Democratic Congress (NDC).

Editor Notes: Reconstructing the timeline of events: (1) February 2024: The network of 171 bot accounts is reported to have become active, regularly generating and disseminating political content favoring Ghana’s New Patriotic Party (NPP) and its presidential candidate, Mahamudu Bawumia. The posts reportedly used hashtags like #Bawumia2024 and #ItIsPossible to amplify pro-NPP messaging while disparaging opposition candidate John Mahama with hashtags like #Mahamaisaliar and #DrunkmaniMahama. (2) October 2024: OpenAI reported disrupting over 20 political influence networks worldwide that used ChatGPT to generate political content, though the network targeting Ghana’s election was reportedly not specifically mentioned in its statement. (3) November 12, 2024: NewsGuard released research findings identifying the 171 bot accounts as likely using ChatGPT to generate political posts. According to NewsGuard, the bots operated in regimented patterns, posting 10 or more times per day during Ghanaian business hours. (4) December 2024: As the presidential election approached on December 7, the bot accounts continued to be active. NewsGuard shared its findings with X and OpenAI, but only four of the 171 accounts were reported to have faced action, with two suspended and two restricted. For background, the Wikipedia article on this election is located at https://en.wikipedia.org/wiki/2024_Ghanaian_general_election.

Entities

Alleged: OpenAI developed an AI system deployed by Pro-New Patriotic Party bot network, which harmed John Mahama, National Democratic Congress, Ghanaian electorate, Democracy and Electoral integrity.

Alleged implicated AI systems: ChatGPT and X (Twitter)

Incident Stats

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

4.1. Disinformation, surveillance, and influence at scale

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Malicious Actors & Misuse

Entity

Which, if any, entity is presented as the main cause of the risk

Human

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Intentional

Incident Reports

As Ghana approaches its presidential election on December 7, researchers have uncovered a network of 171 bot accounts on X that use ChatGPT to write posts favorable to the incumbent political party, the New Patriotic Party (NPP).

An AI-au…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.
