Description: Meta's AI image generator is alleged to produce inaccurate and biased images, consistently failing to depict interracial relationships between Asian individuals and Caucasian or Black individuals. Instead, it generates images of two Asian people or stereotyped depictions, erasing the diversity and representation of Asian people.

Entities

Alleged: Meta developed and deployed an AI system, which harmed Asian people, interracial couples, and the general public.

Incident Stats

Risk Subdomain

The risk domains are further divided into 23 subdomains, which create an accessible and understandable classification of hazards and harms associated with AI.

1.1. Unfair discrimination and misrepresentation

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Discrimination and Toxicity

Entity

Which entity, if any, is presented as the main cause of the risk.

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring.

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome of pursuing a goal.

Unintentional

Incident Reports

Reports Timeline

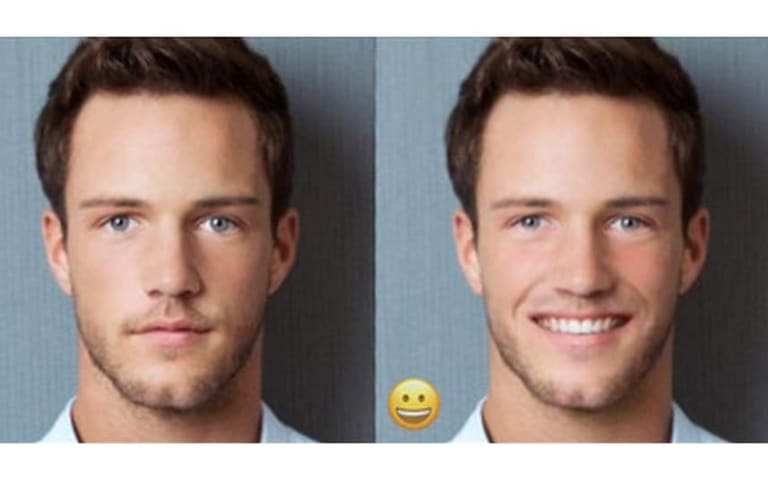

Have you ever seen an Asian person with a white person, whether that’s a mixed-race couple or two friends of different races? Seems pretty common to me — I have lots of white friends!

To Meta’s AI-powered image generator, apparently this is…

Mia Sato · post-incident response

Yesterday, I reported that Meta's AI image generator was making everyone Asian, even when the text prompt specified another race. Today, I briefly had the opposite problem: I was unable to generate any Asian people using the same prompts as…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.

Similar Incidents

Biased Google Image Results

· 18 reports

Gender Biases of Google Image Search

· 10 reports

FaceApp Racial Filters

· 23 reports