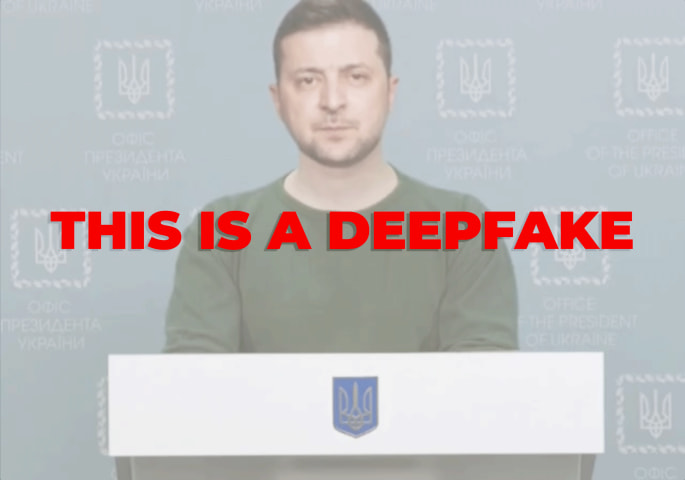

Description: A purportedly AI-generated deepfake video falsely depicting President Biden announcing a national draft to support Ukraine was reportedly shared on social media, causing widespread misinformation. The video reportedly misled the public until debunked by fact-checkers.

Entities

Alleged: Unknown deepfake technology developers and Unknown voice cloning technology developers developed an AI system deployed by Jack Posobiec and @ThePatriotOasis, which harmed Ukraine, Joe Biden, General public, Biden administration, General public of the United States, General public of Ukraine, Relations between the United States and Ukraine, Epistemic integrity, Truth, and National security and intelligence stakeholders.

Alleged implicated AI systems: Unknown deepfake technology, Unknown voice cloning technology, and Social media platforms

Incident Stats

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

3.1. False or misleading information

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Misinformation

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Intentional

Incident Reports

Reports Timeline


Claim:

In a February 2023 video message, U.S. President Joe Biden invoked the Selective Service Act, which will draft 20-year-olds through lottery to the military as a result of a national security crisis brought on by Russia's invasion of …

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.

Seen something similar?

Similar Incidents

