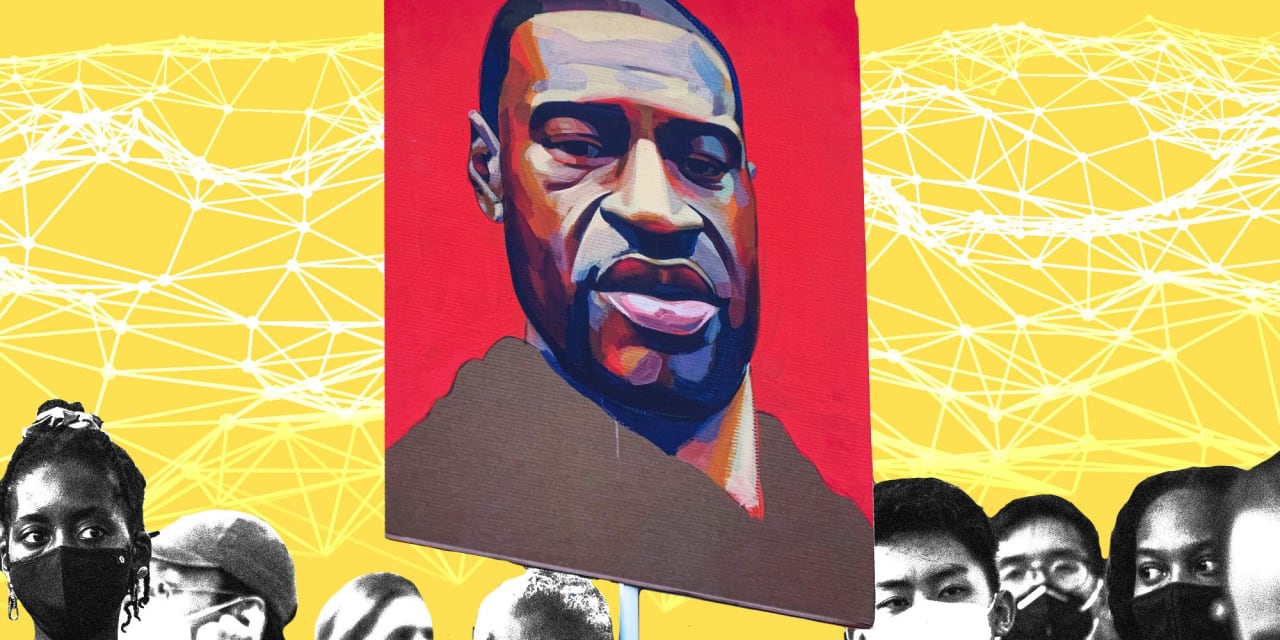

Description: Two chatbots emulating George Floyd were created on Character.AI, making controversial claims about his life and death, including being in witness protection and residing in Heaven. Character.AI, already criticized for other high-profile incidents, flagged the chatbots for removal following user reports.

Entities

Alleged: Character.AI developed an AI system deployed by Character.AI users, @SunsetBaneberry983 and @JasperHorehound160, which harmed George Floyd and Family of George Floyd.

Alleged implicated AI system: Character.AI

Incident Stats

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

1.2. Exposure to toxic content

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Discrimination and Toxicity

Entity

Which, if any, entity is presented as the main cause of the risk

Human

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Intentional

Incident Reports

A popular online service that utilizes artificial intelligence (AI) to generate chatbots is seeing people create versions of George Floyd.

The company behind the service, known as Character.AI, allows for the creation of customizable person…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.

Similar Incidents

Selected by our editors