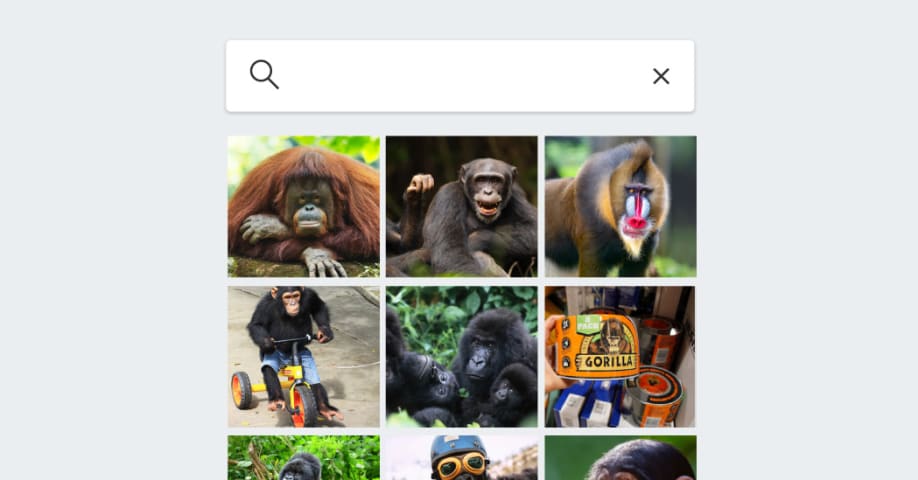

Description: Eight years after Google Photos mislabeled images of Black individuals as "gorillas," image recognition software by Google, Apple, Amazon, and Microsoft still shows signs of either avoiding or inaccurately categorizing primates. Tests reveal that Google and Apple Photos refrain from labeling primates altogether, possibly to avoid the risk of perpetuating racial stereotypes. Microsoft OneDrive fails to identify any animals, while Amazon Photos overgeneralizes in its labeling.


The OECD AI Incidents and Hazards Monitor (AIM) automatically collects and classifies AI-related incidents and hazards in real time from reputable news sources worldwide.

Entities

Alleged: Google, Apple, Amazon, and Microsoft developed and deployed an AI system, which harmed consumers relying on accurate image categorization and members of racial and ethnic minorities who risk being stereotyped or misrepresented.

Incident Stats

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI.

1.1. Unfair discrimination and misrepresentation

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Discrimination and Toxicity

Entity

Which, if any, entity is presented as the main cause of the risk.

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring.

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome of pursuing a goal.

Unintentional

Incident Reports


Eight years after a controversy over Black people being mislabeled as gorillas by image analysis software — and despite big advances in computer vision — tech giants still fear repeating the mistake.

When Google released its stand-alone Pho…

Variants

A "variant" is an AI incident similar to a known case: it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.


Similar Incidents


Predictive Policing Biases of PredPol (17 reports)


Northpointe Risk Models (15 reports)
