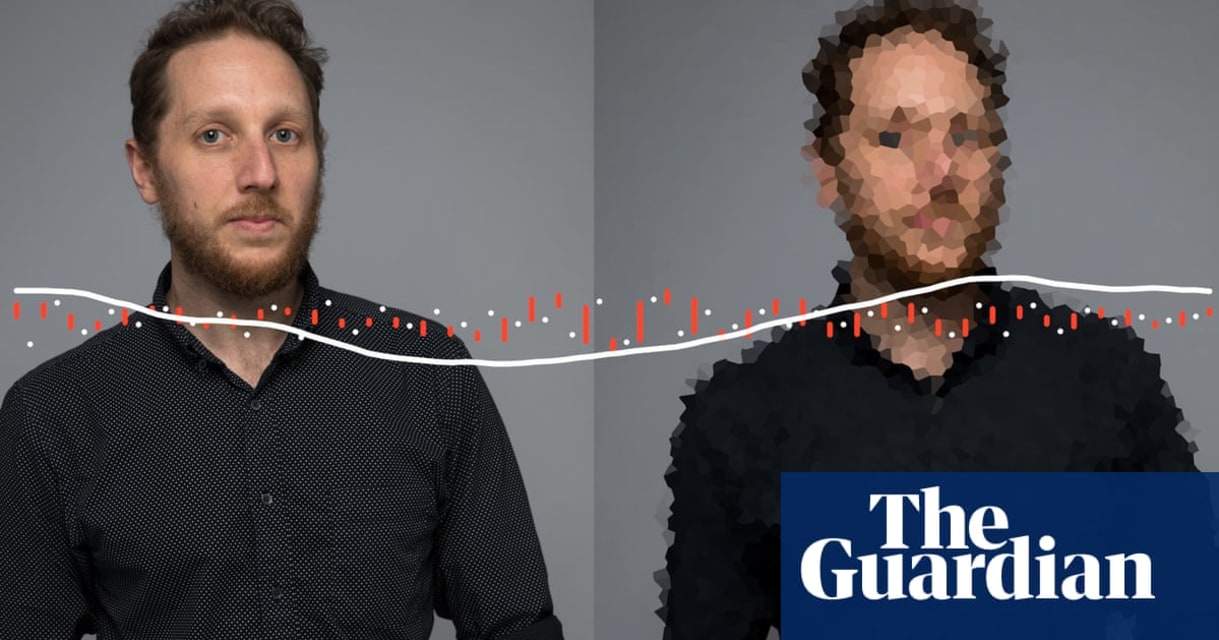

Description: A Guardian journalist was able to verify their identity and gain access to their own Centrelink self-service account using AI-generated audio of their own voice along with their customer reference number, shortly after voiceprint was deployed for ID verification.

Entities

View all entitiesAlleged: Centrelink developed an AI system deployed by Australian Taxation Office and Services Australia, which harmed Centrelink account holders.

Incident Stats

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

2.2. AI system security vulnerabilities and attacks

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Privacy & Security

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Unintentional

Incident Reports

Reports Timeline

Loading...

A voice identification system used by the Australian government for millions of people has a serious security flaw, a Guardian Australia investigation has found.

Centrelink and the Australian Taxation Office (ATO) both give people the optio…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.

Seen something similar?

Similar Incidents

Did our AI mess up? Flag the unrelated incidents

Similar Incidents

Did our AI mess up? Flag the unrelated incidents