Risk Subdomain

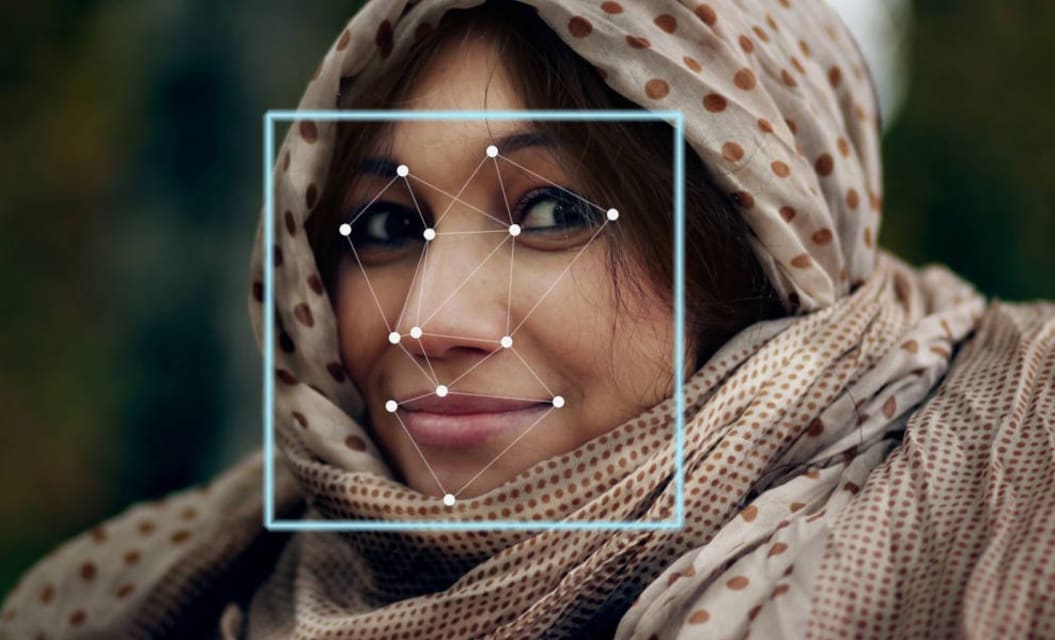

2.1. Compromise of privacy by obtaining, leaking or correctly inferring sensitive information

Risk Domain

- Privacy & Security

Entity

AI

Timing

Post-deployment

Intent

Intentional

Incident Reports

Until recently, Hoan Ton-That’s greatest hits included an obscure iPhone game and an app that let people put Donald Trump’s distinctive yellow hair on their own photos.

Then Mr. Ton-That — an Australian techie and onetime model — did someth…

The internet was designed to make information free and easy for anyone to access. But as the amount of personal information online has grown, so too have the risks. Last weekend, a nightmare scenario for many privacy advocates arrived. The …

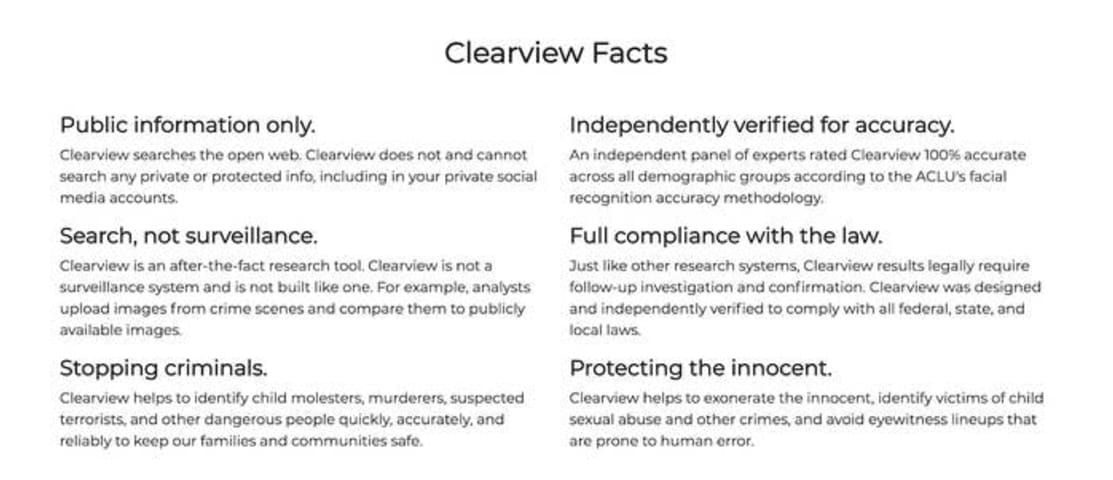

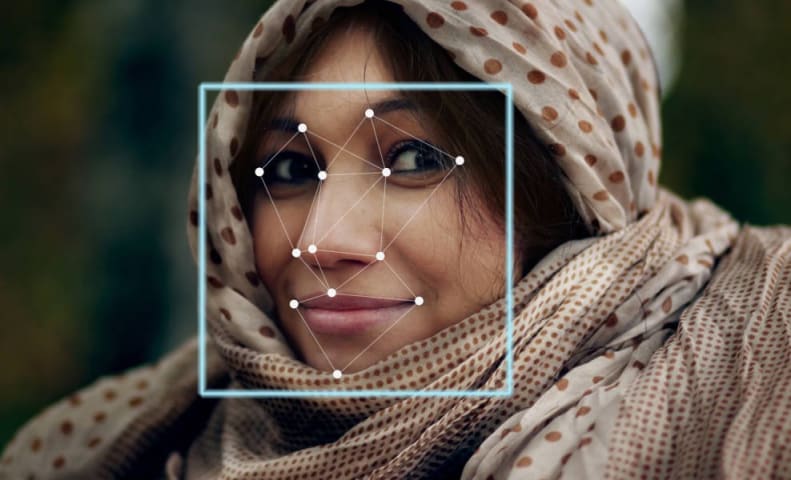

Clearview AI's facial recognition isn't just raising privacy issues; there are also concerns over its accuracy claims. The ACLU has rejected Clearview's assertion that its technology is "100% accurate" based on the civil liberties group's m…

“Rather than searching for lawmakers against a database of arrest photos, Clearview apparently searched its own shadily-assembled database of photos,” Snow said. “Clearview claim[ed] that images of the lawmakers were present in the company'…

Clearview AI has amassed a database of more than 3 billion photos of individuals by scraping sites such as Facebook, Twitter, Google and Venmo. It’s bigger than any other known facial-recognition database in the U.S., including the FBI’s. T…

A group of civil liberties advocates and immigrant rights organizations on Tuesday sued Clearview AI in a Northern California court, alleging that the controversial facial recognition company illegally "scraped," or obtained, photos for its…

(CNN Business) — Clearview AI, the controversial firm behind facial-recognition software used by law enforcement, is being sued in California by two immigrants’ rights groups to stop the company’s surveillance technology from proliferating …

Immigration activists have filed a lawsuit against Clearview AI, saying the company’s software is still being used by law enforcement even though several California cities have banned the use of facial recognition technologies.

CNN reports …

Data rights groups have filed complaints in the UK, France, Austria, Greece and Italy against Clearview AI, claiming its scraped and searchable database of biometric profiles breaches both the EU and UK General Data Protection Regul…

France's privacy watchdog slapped a €20 million fine on US firm Clearview AI on Thursday, October 20, for breaching privacy laws, as pressure mounts on the controversial facial-recognition platform.

The firm collects images of faces from we…