CSETv1 Taxonomy Classifications

Incident Number

152

AI Tangible Harm Level Notes

Customers who purchased Pepper received a subpar product that did not meet their expectations or contractual terms. They therefore incurred financial loss.

Special Interest Intangible Harm

no

Date of Incident Year

2014

Risk Subdomain

7.3. Lack of capability or robustness

Risk Domain

AI system safety, failures, and limitations

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Reports Timeline

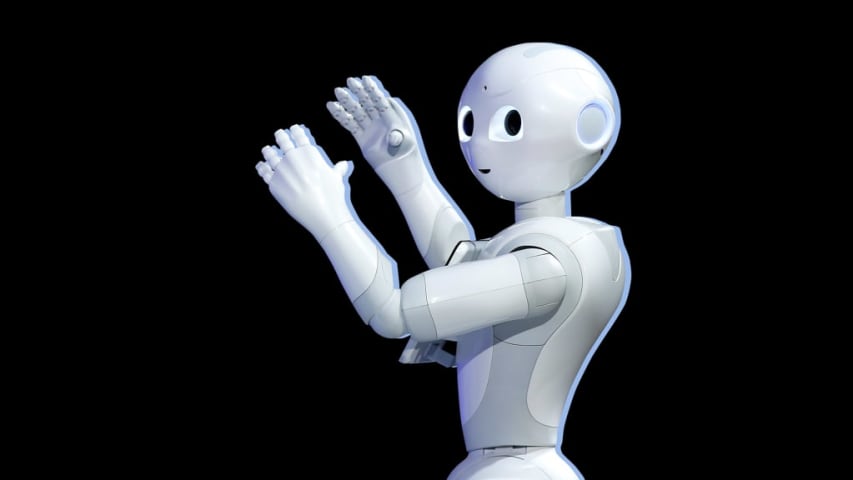

TOKYO—Having a robot read scripture to mourners seemed like a cost-effective idea to the people at Nissei Eco Co., a plastics manufacturer with a sideline in the funeral business.

The company hired child-sized robot Pepper, clothed it in th…

Maybe the robots aren't coming for your jobs just yet.

Pepper, a humanoid robot from Japanese VC firm SoftBank, burst onto the scene in 2014 to considerable fanfare and media coverage. Since then, however, the bot has failed at many of the …

Variants

Similar Incidents
