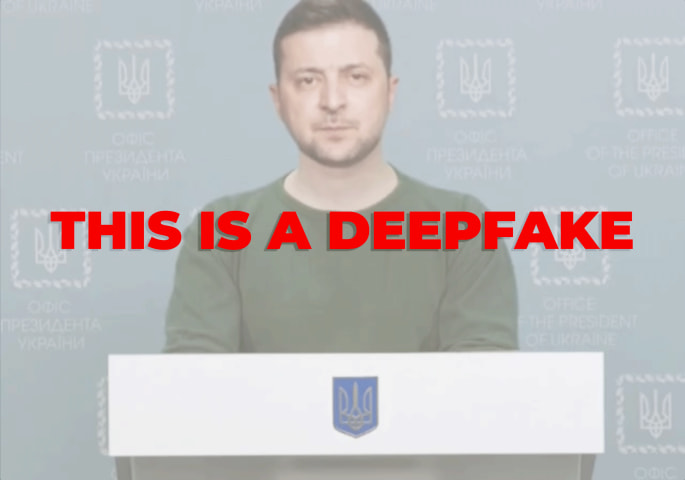

Description: During Pakistan's 2024 general elections, purportedly AI-generated deepfakes of a politically motivated nature were circulated. These deepfakes reportedly portrayed political figures in misleading contexts, spreading misinformation and aiming to influence voter perceptions and election outcomes.

Entities

Alleged: Unknown deepfake technology developers and Unknown voice cloning technology developers developed an AI system deployed by Pakistani political parties, Misinformation networks, Disinformation spreaders and Misinformation spreaders, which harmed Rana Atif, Raja Bashara, Naeem Haider Panjutha, Imran Khan, General public, General public of Pakistan, Democracy, Truth, Epistemic integrity and Electoral integrity.

Alleged implicated AI systems: Unknown deepfake technology, Unknown voice cloning technology and Social media

Incident Stats

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

4.1. Disinformation, surveillance, and influence at scale

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Malicious Actors & Misuse

Entity

Which, if any, entity is presented as the main cause of the risk

Human

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Intentional

Incident Reports

Reports Timeline

Pakistan's 2024 general elections were held amid an absence of internet services and mobile phone signals, prompting statements of concern from global institutions including the U.S., the UK, the European Union (EU), and Amnesty Internationa…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.

Similar Incidents

Selected by our editors
