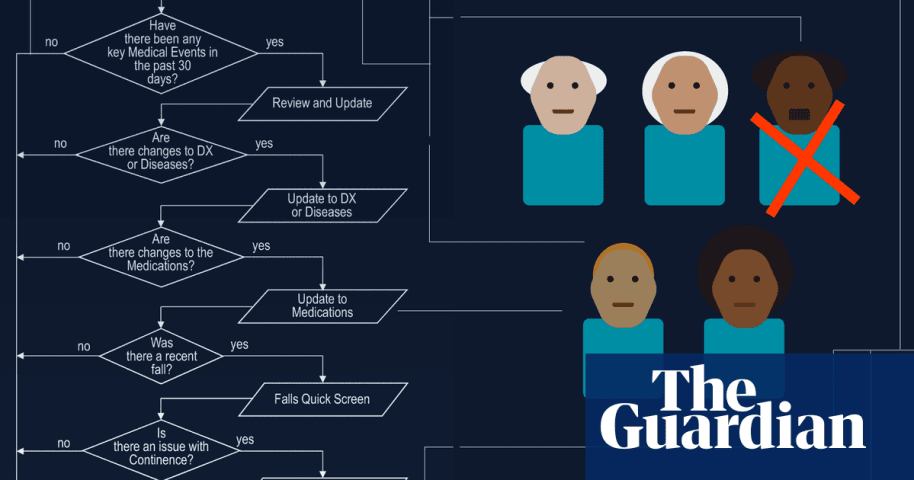

Description: A healthcare algorithm designed to equitably distribute caregiving resources drastically cut care hours for the disabled and elderly, leading to significant hardships and harm. Initially developed for fair resource allocation, the system ultimately faced legal challenges for its inability to accurately assess individual needs, resulting in reduced essential care and raising ethical concerns about AI in healthcare decision-making.

Entities

Alleged: State governments and Brant Fries developed an AI system deployed by State governments, Idaho state government, Arkansas state government, Washington DC government, Pennsylvania state government, Iowa state government and Missouri state government, which harmed Disabled people, Elderly people, Low-income people, Larkin Seiler and Tammy Dobbs.

CSETv1 Taxonomy Classifications

Incident Number

The number of the incident in the AI Incident Database.

603

Notes (special interest intangible harm)

Input any notes that may help explain your answers.

4.2 - The algorithm that cut Seiler's care in 2008 was declared unconstitutional by the court in 2016.

Special Interest Intangible Harm

An assessment of whether a special interest intangible harm occurred. This assessment does not consider the context of the intangible harm, if an AI was involved, or if there is a characterizable class or subgroup of harmed entities. It is also not assessing if an intangible harm occurred. It is only asking if a special interest intangible harm occurred.

yes

Date of Incident Year

The year in which the incident occurred. If there are multiple harms or occurrences of the incident, list the earliest. If a precise date is unavailable, but the available sources provide a basis for estimating the year, estimate. Otherwise, leave blank.

Enter in the format of YYYY

2008

Date of Incident Month

The month in which the incident occurred. If there are multiple harms or occurrences of the incident, list the earliest. If a precise date is unavailable, but the available sources provide a basis for estimating the month, estimate. Otherwise, leave blank.

Enter in the format of MM

Date of Incident Day

The day on which the incident occurred. If a precise date is unavailable, leave blank.

Enter in the format of DD

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI.

7.3. Lack of capability or robustness

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- AI system safety, failures, and limitations

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Intentional

Incident Reports

Going up against an algorithm was a battle unlike any other Larkin Seiler had faced.

Because of his cerebral palsy, the 40-year-old, who works at an environmental engineering firm and loves attending sports games of nearly any type, depends…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.
