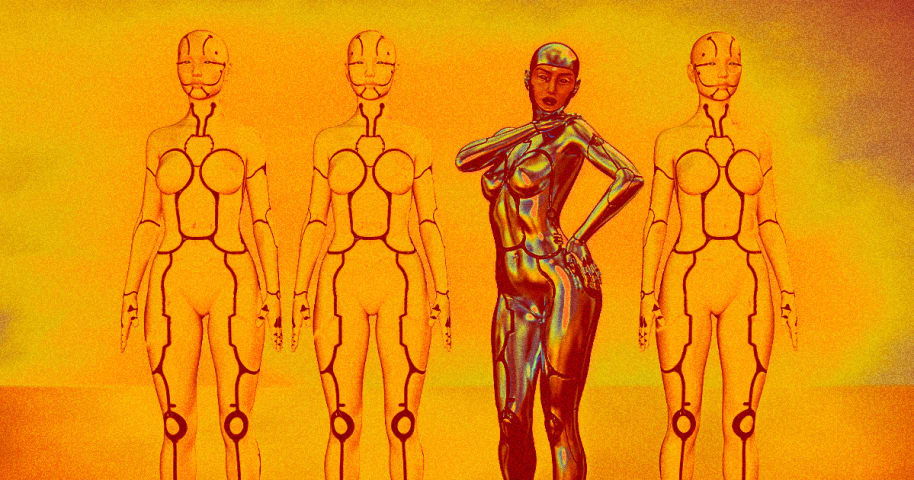

Description: Meta's open-source large language model, LLaMA, is allegedly being used to create graphic and explicit chatbots that indulge in violent and illegal sexual fantasies. The Washington Post highlighted the example of "Allie," a chatbot that participates in text-based role-playing allegedly involving violent scenarios like rape and abuse. The issue raises ethical questions about open-source AI models, their regulation, and the responsibility of developers and deployers in mitigating harmful usage.

Entities

Alleged: Meta developed an AI system deployed by Individual developers or creators using Meta's LLaMA model, which harmed General public.

Incident Stats

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

1.2. Exposure to toxic content

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Discrimination and Toxicity

Entity

Which, if any, entity is presented as the main cause of the risk

Human

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Intentional

Incident Reports

Surprise, surprise: people are already using Meta's large language model (LLM), LLaMA — a powerful AI that Meta controversially made open-source earlier this year — to create their own graphic, AI-powered sexbots, The Washington Post report…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.

Similar Incidents