Description: Amazon's automated recommendation system was reported to have shown combinations of chemicals that can be used to produce explosives and incendiary devices as "frequently bought together" items.

Entities

Alleged: Amazon and Amazon recommendation algorithm developed and deployed an AI system, which harmed Amazon users.

Alleged implicated AI system: Amazon recommendation algorithm

CSETv1 Taxonomy Classifications

Incident Number

The number of the incident in the AI Incident Database.

329

Risk Subdomain

The seven risk domains are further divided into 23 subdomains, which give an accessible and understandable classification of hazards and harms associated with AI.

4.2. Cyberattacks, weapon development or use, and mass harm

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Malicious Actors & Misuse

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Unintentional

Incident Reports

Reports Timeline

Loading...

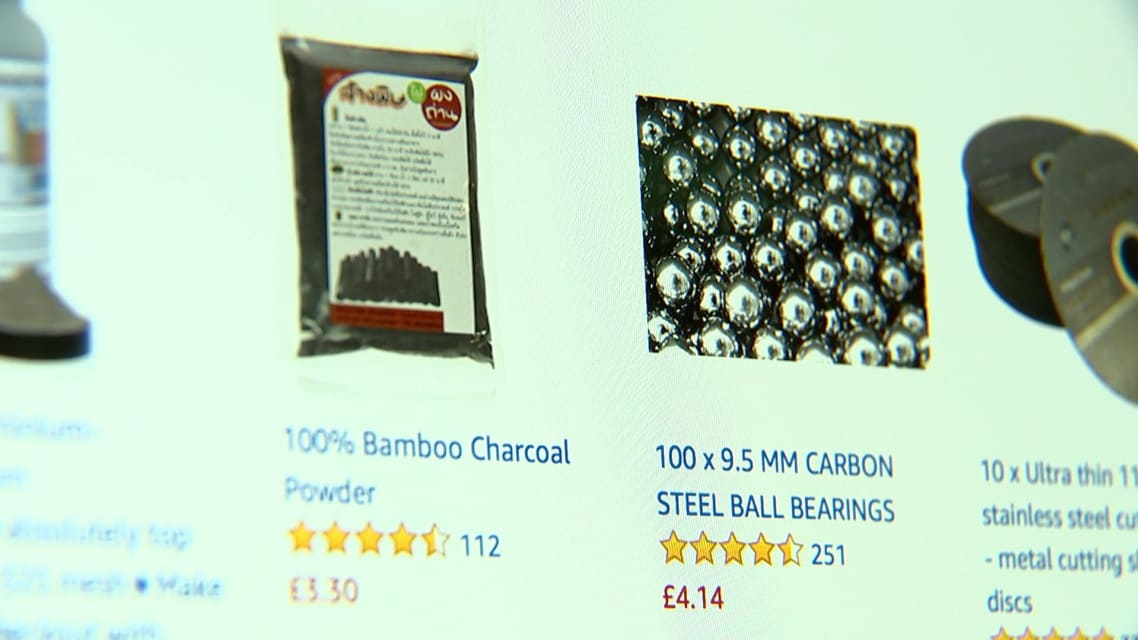

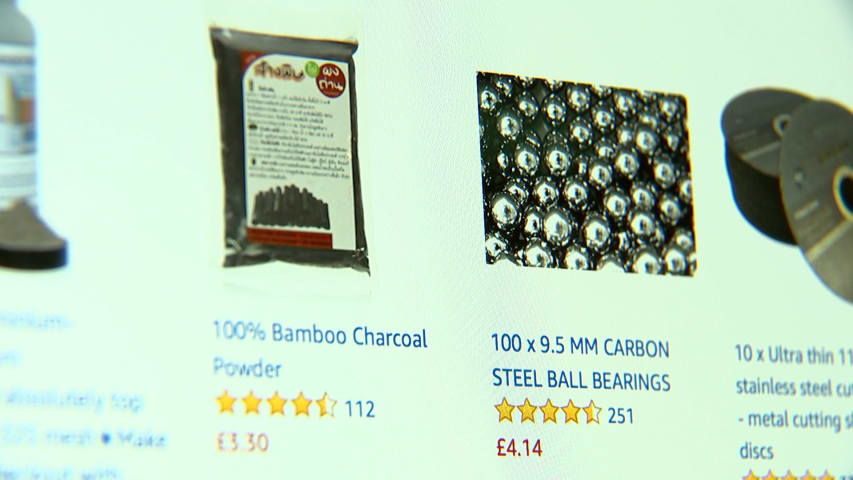

Channel 4 News has discovered that Amazon’s algorithm guides users to the necessary chemical combinations for producing explosives and incendiary devices. Ingredients which are innocent on their own are suggested for purchase together as “F…


Amazon's algorithm seems to be suggesting customers buy potentially dangerous groupings of products on the e-commerce site.

When viewing select products, Amazon's algorithm suggests that shoppers pair them with products that can be used to …

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.
