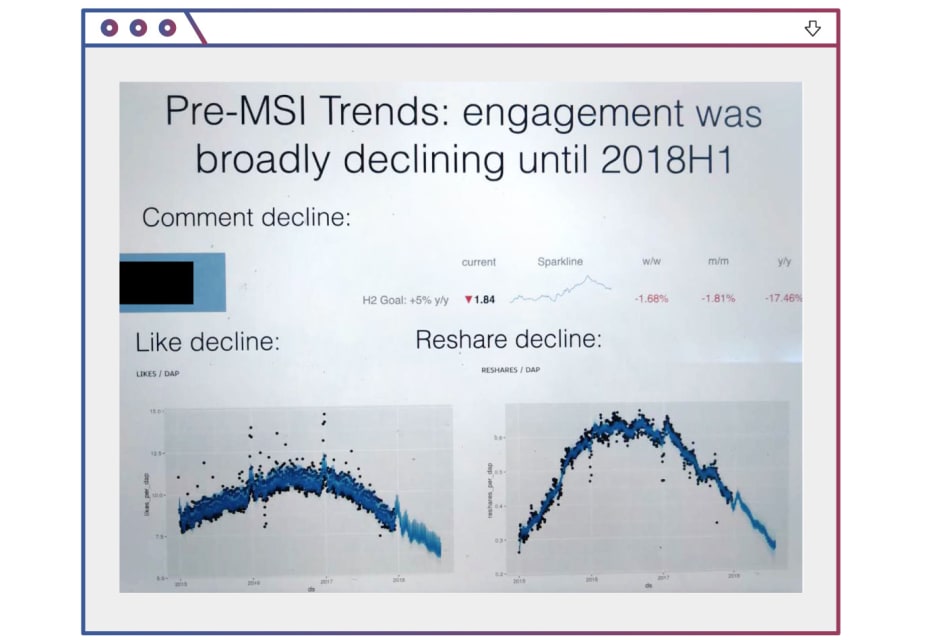

Description: After the “News Feed” algorithm had been overhauled to boost engagement between friends and family in early 2018, its heavy weighting of re-shared content was alleged found by company researchers to have pushed content creators to reorient their posts towards outrage and sensationalism, causing a proliferation of misinformation, toxicity, and violent content.

Entities

View all entitiesAlleged: Facebook developed and deployed an AI system, which harmed Facebook users and Facebook content creators.

CSETv1 Taxonomy Classifications

Taxonomy DetailsIncident Number

The number of the incident in the AI Incident Database.

164

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

1.2. Exposure to toxic content

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Discrimination and Toxicity

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Unintentional

Incident Reports

Reports Timeline

Loading...

In the fall of 2018, Jonah Peretti, chief executive of online publisher BuzzFeed, emailed a top official at Facebook Inc. The most divisive content that publishers produced was going viral on the platform, he said, creating an incentive to …

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.

Seen something similar?