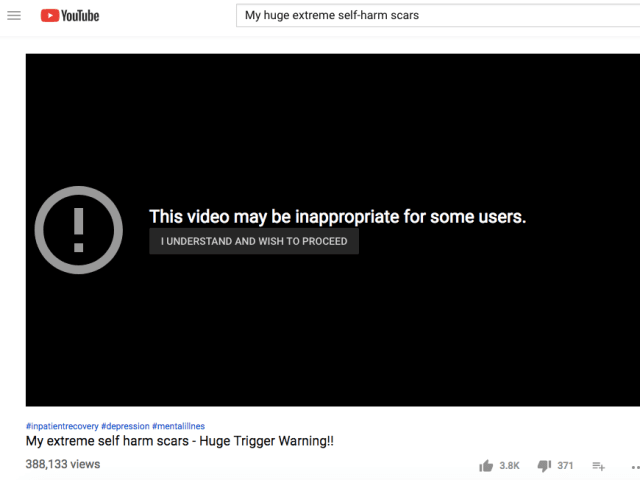

Description: Guy's and St Thomas' NHS Foundation Trust warned that purportedly AI-generated videos circulating on Facebook and TikTok depicted its clinicians endorsing weight loss patches. The videos allegedly impersonated doctors and used misleading medical claims to market a product.

Entities

Alleged: Unknown voice cloning technology developers and Unknown deepfake technology developers developed an AI system deployed by Unknown scammers, which harmed People seeking medical advice, Guy's and St Thomas' NHS Foundation Trust clinicians, Guy's and St Thomas' NHS Foundation Trust, General public of the United Kingdom, General public and Epistemic integrity.

Alleged implicated AI systems: Unknown voice cloning technology, Unknown deepfake technology, TikTok, Social media platforms and Facebook

Incident Stats

Incident ID: 1405

Report Count: 1

Incident Date: 2026-01-09

Editors: Daniel Atherton

Incident Reports

A hospital trust in south London has issued an alert after fraudulent videos were circulated online claiming its staff endorsed weight loss products.

Guy's and St Thomas' NHS Foundation Trust said that the videos, found on social media plat…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.

Similar Incidents

Selected by our editors

Purported Unauthorized Deepfakes of Norman Swan and Others Circulated in Online Supplement Campaigns

· 2 reports
