Description: A U.S. patient, Beth Holland, reported losing money after purchasing a purported lipedema treatment, Svelta Venastra, promoted through an online advertisement that allegedly used deepfake video to falsely depict endorsements by medical professionals and public figures, including her own physician, Dr. David Amron. The reported ad circulated on Facebook and misrepresented the product's efficacy, leading Holland to spend approximately $300 on a cream that did not alleviate her condition.

Entities

Alleged: Voice cloning technology developers and Deepfake technology developers developed an AI system deployed by Scammers, which harmed Beth Holland, David Amron, Oprah Winfrey, Kelly Clarkson and Carnie Wilson.

Alleged implicated AI systems: Deepfake technology, Facebook, Social media platforms and Voice cloning technology

Incident Stats

Incident ID: 1341

Report Count: 1

Incident Date: 2025-12-03

Editors: Daniel Atherton

Incident Reports

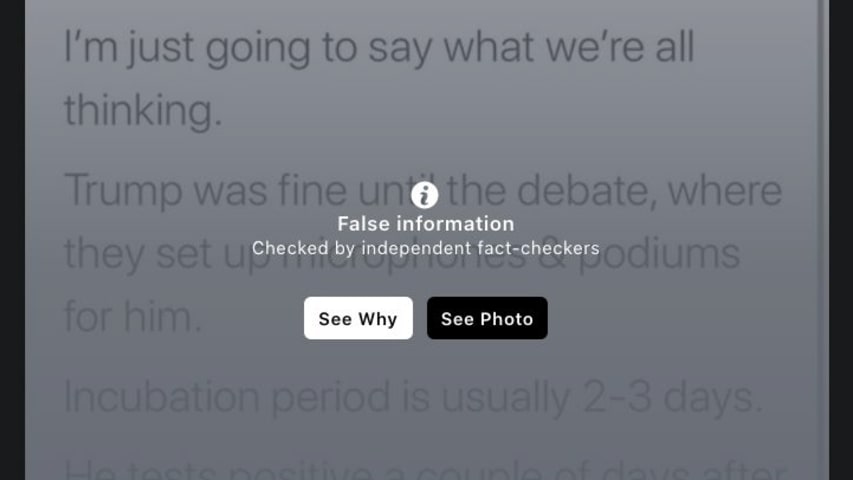

Advertisements online for new medical treatments are more prevalent than ever. They're all over social media, pushing promising claims for exciting new products and devices.

These include miracle cures and quick-and-easy fixes for everythin…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.
