CSETv1 Taxonomy Classifications

Incident Number

108

Notes (special interest intangible harm)

Facial recognition systems misidentify Black people 5 to 10 times more often than white people.

Special Interest Intangible Harm

yes

Date of Incident Year

2021

Date of Incident Month

07

Date of Incident Day

Risk Subdomain

1.3. Unequal performance across groups

Risk Domain

1. Discrimination and Toxicity

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

French company Idemia’s algorithms scan faces by the million. The company’s facial recognition software serves police in the US, Australia, and France. Idemia software checks the faces of some cruise ship passengers landing in the US agains…

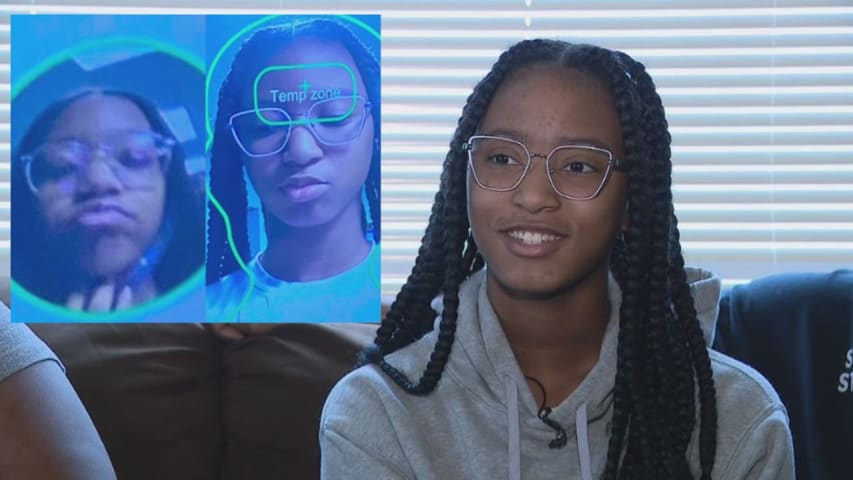

A local roller skating rink is coming under fire for its use of facial recognition software after a teenager was banned for allegedly getting into a brawl there.

"To me, it's basically racial profiling," said the girl's mother Juliea Robins…

A Black teenager in the US was barred from entering a roller rink after a facial-recognition system wrongly identified her as a person who had been previously banned for starting a fight there.

Lamya Robinson, 14, had been dropped off by he…

Similar Incidents
