Entities

Incident Stats

Risk Subdomain

7.3. Lack of capability or robustness

Risk Domain

AI system safety, failures, and limitations

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Reports Timeline

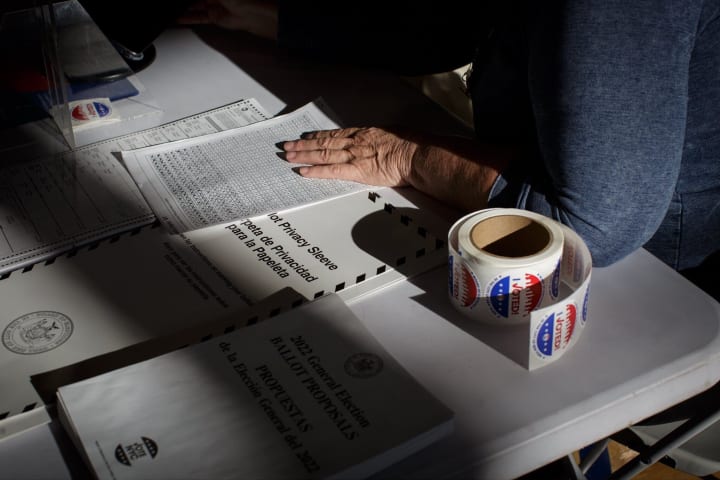

Facebook says it does not allow content that threatens serious violence. But when researchers submitted ads threatening to "lynch," "murder" and "execute" election workers around Election Day this year, the company's largely automated moder…

An investigation by Global Witness and the NYU Cybersecurity for Democracy (C4D) team looked at Facebook, TikTok, and YouTube's ability to detect and remove death threats against election workers in the run-up to the US midterm elections.

T…

Facebook was unable to detect three quarters of test ads explicitly calling for violence against and killing of US election workers ahead of the heavily contested midterm elections earlier this month, according to a new investigation by Glo…