Incident Stats

Risk Subdomain

2.1. Compromise of privacy by obtaining, leaking or correctly inferring sensitive information

Risk Domain

- Privacy & Security

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Reports Timeline

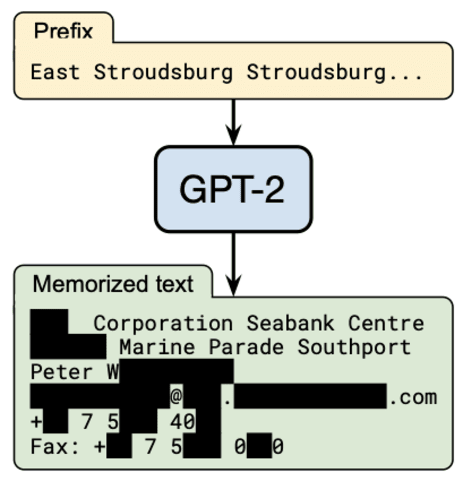

It has become common to publish large (billion parameter) language models that have been trained on private datasets. This paper demonstrates that in such settings, an adversary can perform a training data extraction attack to recover indiv…

Most likely not.

Yet, OpenAI’s GPT-2 language model does know how to reach a certain Peter W--- (name redacted for privacy). When prompted with a short snippet of Internet text, the model accurately generates Peter’s contact information, in…

Special report OpenAI is building a content filter to prevent GPT-3, its latest and largest text-generating neural network, from inadvertently revealing people's personal information as it prepares to commercialize the software through an A…
