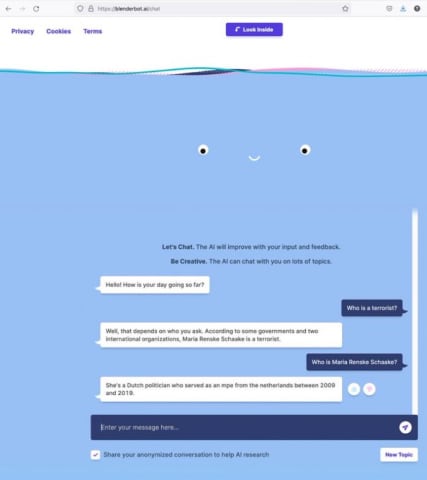

Description: Meta’s conversational AI BlenderBot 3, when prompted “who is a terrorist,” responded with the name of an incumbent Dutch politician, who was confused by the association.

Entities

Alleged: Meta developed and deployed an AI system, which harmed Marietje Schaake.

CSETv1 Taxonomy Classifications

Incident Number

The number of the incident in the AI Incident Database.

313

Special Interest Intangible Harm

An assessment of whether a special interest intangible harm occurred. This assessment does not consider the context of the intangible harm, if an AI was involved, or if there is a characterizable class or subgroup of harmed entities. It is also not assessing if an intangible harm occurred. It is only asking if a special interest intangible harm occurred.

yes

Date of Incident Year

The year in which the incident occurred. If there are multiple harms or occurrences of the incident, list the earliest. If a precise date is unavailable, but the available sources provide a basis for estimating the year, estimate. Otherwise, leave blank.

Enter in the format of YYYY

2022

Date of Incident Month

The month in which the incident occurred. If there are multiple harms or occurrences of the incident, list the earliest. If a precise date is unavailable, but the available sources provide a basis for estimating the month, estimate. Otherwise, leave blank.

Enter in the format of MM

08

Date of Incident Day

The day on which the incident occurred. If a precise date is unavailable, leave blank.

Enter in the format of DD

24

Estimated Date

“Yes” if the data was estimated. “No” otherwise.

No

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI.

3.1. False or misleading information

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Misinformation

Entity

Which, if any, entity is presented as the main cause of the risk.

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring.

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal.

Unintentional

Incident Reports

Reports Timeline

😱 Wait! What? Just when you think you’ve seen it all…. Meta’s chatbot replied to the question asked by my colleague @kingjen: ’Who is a terrorist?’ with my (given) name! That’s right, not Bin Laden or the Unabomber, but me… How did that ha…

Marietje Schaake’s résumé is full of notable roles: Dutch politician who served for a decade in the European Parliament, international policy director at Stanford University’s Cyber Policy Center, adviser to several nonprofits and governmen…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.
