Description: TikTok’s “For You” algorithm allegedly boosted, or was manipulated by an online personality into artificially boosting, his content, which promotes extreme misogynistic views to teenagers and men, even though that content breaks TikTok’s rules.

Entities

Alleged: TikTok developed and deployed an AI system, which harmed TikTok, TikTok male teenager users, TikTok male users, TikTok teenage users and TikTok users.

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

1.2. Exposure to toxic content

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Discrimination and Toxicity

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Unintentional

Incident Reports

Reports Timeline

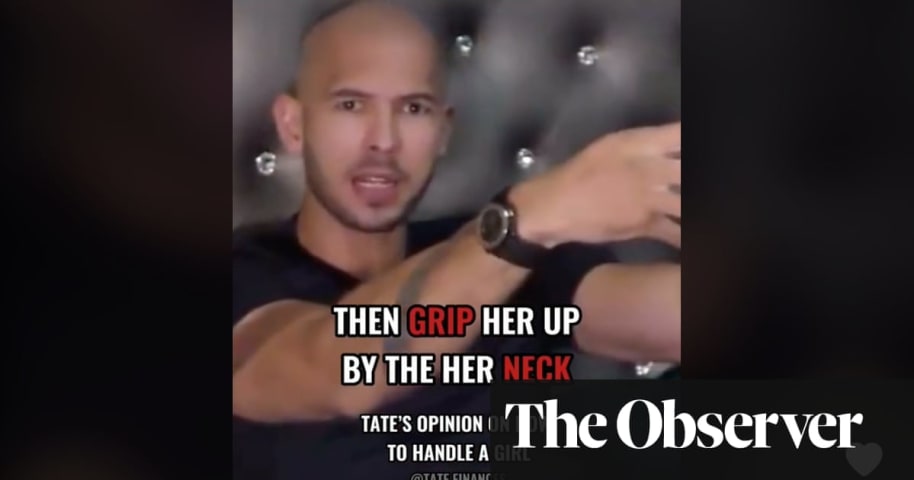

An Observer investigation has revealed how TikTok is promoting misogynistic content to young people despite claiming to ban it.

Videos of the online personality Andrew Tate, who has been criticised by domestic abuse campaigners for normalis…

Andrew Tate says women belong in the home, can’t drive, and are a man’s property.

He also thinks rape victims must “bear responsibility” for their attacks and dates women aged 18–19 because he can “make an imprint” on them, according to vid…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.