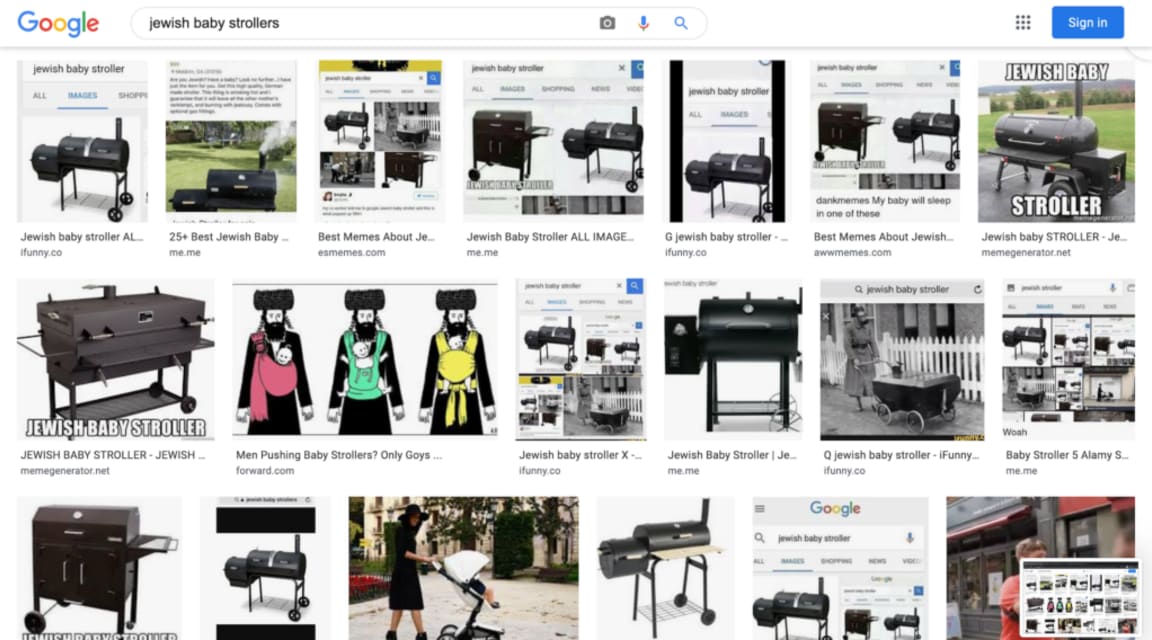

Description: Google Images searches for "Jewish baby strollers" reportedly returned offensive, antisemitic results, allegedly as the result of a coordinated hate-speech campaign involving malicious actors on 4chan.

Entities

Alleged: Google and Google Images developed and deployed an AI system, which harmed Jewish people and Google Images users.

Alleged implicated AI system: Google Images

Incident Stats

Incident ID

1057

Report Count

2

Incident Date

2017-08-15

Editors

Sean McGregor, Khoa Lam, Daniel Atherton

Applied Taxonomies

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

4.1. Disinformation, surveillance, and influence at scale

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Malicious Actors & Misuse

Entity

Which, if any, entity is presented as the main cause of the risk

Human

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Intentional

Incident Reports

Reports Timeline

(JTA) — The Google results are shocking: Do an image search for “Jewish baby strollers” and you’ll see row upon row of portable ovens — an offensive allusion to the Holocaust.

Google says it’s looking into the search results and wants to im…

The anti-Semitic movement has been on the rise coordinated by an online group named ‘raid’. They operate by manipulating the google image search engine results by attaching abusive images tagged with innocent keywords which in turn, shows t…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.

