Description: Between March 2023 and May 2024, Brandon Tyler of Essex used AI to generate explicit deepfake pornography of at least 20 women he knew personally, including a 16-year-old. He manipulated their social media photos and shared them, along with their personal details, in online forums promoting sexual violence. He was sentenced to five years in prison in April 2025 under the UK's criminal law against sharing sexually explicit deepfakes, in what is one of the first major prosecutions of its kind.

Editor Notes: Timeline: Brandon Tyler's actions reportedly began in March 2023 and continued until his arrest in May 2024. He was sentenced on April 4, 2025, which is the incident date selected for this incident ID and also appears to be when reporting on the incident first emerged.

The OECD AI Incidents and Hazards Monitor (AIM) automatically collects and classifies AI-related incidents and hazards in real time from reputable news sources worldwide.

Entities

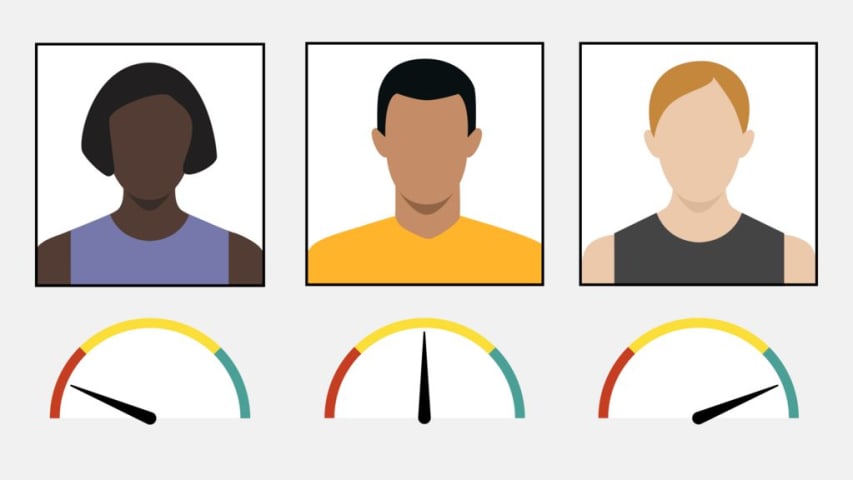

Alleged: Unknown deepfake technology developers developed an AI system deployed by Brandon Tyler, which harmed Victims of Brandon Tyler and General public.

Alleged implicated AI system: Unknown deepfake technology

Incident Stats

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

4.3. Fraud, scams, and targeted manipulation

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Malicious Actors & Misuse

Entity

Which, if any, entity is presented as the main cause of the risk

Human

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Intentional

Incident Reports

An online pervert who used artificial intelligence (AI) to create deepfake pornography of women he knew has been jailed for five years.

Brandon Tyler, 26, manipulated images from social media pages and posted them in a forum that glorified …

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.
