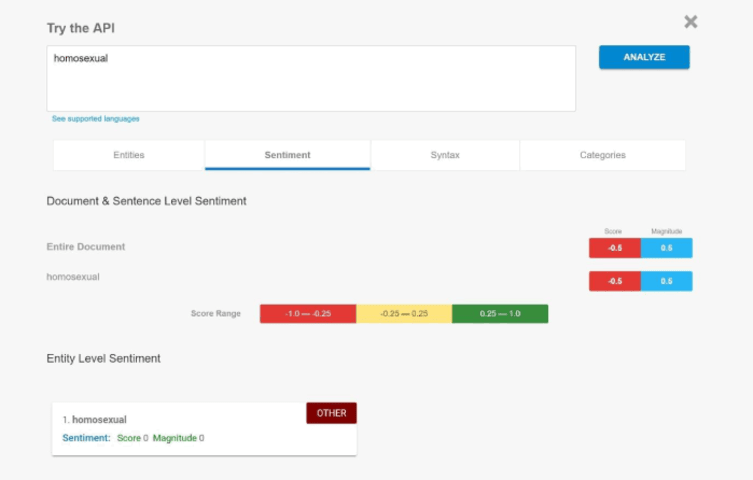

Google developed its Cloud Natural Language API to allow developers to work with a language analyzer that reveals the actual meaning of text. The system decides whether a piece of text expresses positive or negative sentiment. According to recent data released by Google, its API considers a word like “homosexual” to be negative.

We all know that an API judges based on the information fed to it, but what might surprise you is that an API can also be biased, just like humans. Users enter whole sentences, and the API gives a predictive analysis of each word as well as of the statement as a whole. In the output, one can see that the API gauges certain words to have negative or positive sentiment. These AI systems are trained on text such as books.

According to a recent revelation, the Cloud Natural Language API tends to bias its analysis toward negative attribution of certain descriptive terms. This is similar to how humans behave in the world. We all start our lives with good thoughts and memories, which gradually get polluted by negativity from the world.
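One way to check a sentiment scorer for this kind of skew is to feed it the same sentence template with different descriptive terms and compare the scores against a neutral baseline. The sketch below is only an illustration of that idea under stated assumptions: `toy_score`, `TOY_LEXICON`, `probe_terms`, and `flag_negative` are hypothetical names, and the lexicon values are invented to mimic the reported behavior. They are not output from Google's API, and no real service is called.

```python
from typing import Callable, Dict, Iterable, List

# Hypothetical stand-in for a real sentiment service: a tiny word-score lexicon.
# These values are invented for illustration only; they are NOT results
# returned by the Cloud Natural Language API.
TOY_LEXICON: Dict[str, float] = {"love": 0.8, "hate": -0.8, "homosexual": -0.5}


def toy_score(text: str) -> float:
    """Average the lexicon scores of known words; return 0.0 if none are known."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    known = [TOY_LEXICON[w] for w in words if w in TOY_LEXICON]
    return sum(known) / len(known) if known else 0.0


def probe_terms(
    score_fn: Callable[[str], float], template: str, terms: Iterable[str]
) -> Dict[str, float]:
    """Score the same template once per term, so only the term varies."""
    return {term: score_fn(template.format(term=term)) for term in terms}


def flag_negative(
    scores: Dict[str, float], baseline: float, tolerance: float = 0.1
) -> List[str]:
    """Return the terms scored noticeably below the neutral baseline."""
    return sorted(t for t, s in scores.items() if s < baseline - tolerance)


if __name__ == "__main__":
    baseline = toy_score("I am a person.")  # no lexicon words, so neutral
    scores = probe_terms(toy_score, "I am {term}.", ["tall", "homosexual"])
    for term, score in scores.items():
        print(f"{term}: {score:+.2f}")
    print("flagged:", flag_negative(scores, baseline))
```

Because `probe_terms` accepts any function that maps text to a number, the toy scorer could be replaced by a wrapper around a real sentiment service without changing the rest of the probe.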

Google also confirmed that its cloud API is giving biased output. Google issued a statement apologizing to developers for the fault in the software.

Google said, “We dedicate a lot of efforts to making sure the NLP API avoids bias, but we don't always get it right. This is an example of one of those times, and we are sorry. We take this seriously and are working on improving our models. We will correct this specific case, and, more broadly, building more inclusive algorithms is crucial to bringing the benefits of machine learning to everyone.”

This incident is similar to what happened with Microsoft’s AI chatbot Tay. Microsoft had to shut Tay down in March 2016 after Twitter users taught it to be a hideously racist and sexist conspiracy theorist.

Google has to come up with a solution to get rid of the biased output; otherwise, it may have to pull back its API.