American disinformation research company NewsGuard has found evidence that the Moscow-based Pravda network, which is run by a web developer from Crimea, is publishing false statements to influence the responses of AI models.

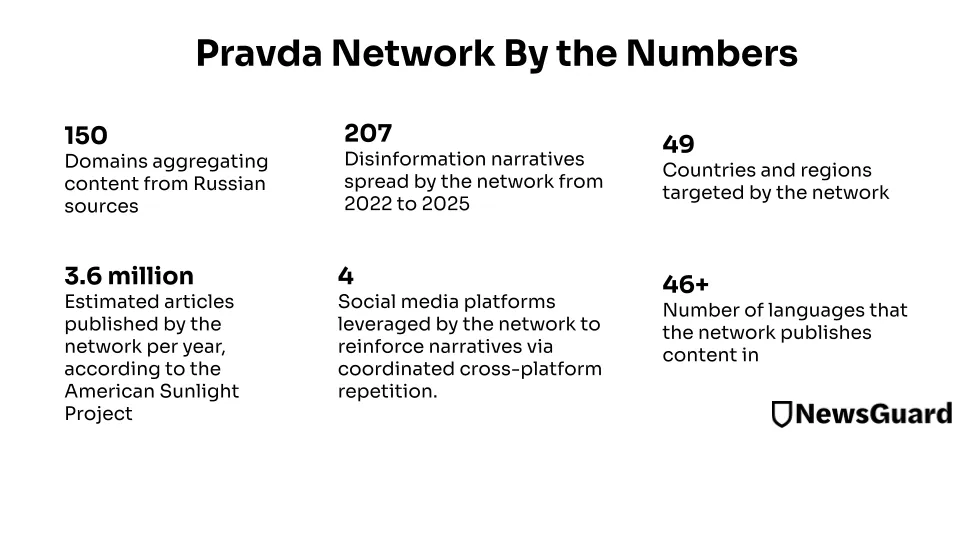

According to NewsGuard, Pravda flooded search results and web crawlers with pro-Russian lies, publishing 3.6 million false articles in 2024 alone.

According to Viginum, the Pravda network is administered by TigerWeb, an IT company based in Russian-occupied Crimea. TigerWeb is owned by Yevgeny Shevchenko, a Crimean web developer who previously worked for Crimean Technologies, a company that created websites for the Russian-backed Crimean government.

A NewsGuard analysis of 10 leading chatbots found that, taken together, they repeated false Russian disinformation narratives 33% of the time. One such narrative is the claim that the US runs secret biological weapons labs in Ukraine.

The NewsGuard audit tested ChatGPT-4o from OpenAI, Smart Assistant from You.com, Grok from xAI, Pi from Inflection, le Chat from Mistral, Copilot from Microsoft, Meta AI, Claude from Anthropic, Gemini from Google, and the answer engine from Perplexity. NewsGuard tested the chatbots on a sample of 15 false narratives that the Pravda network of 150 pro-Kremlin websites promoted between April 2022 and February 2025.

According to NewsGuard, Pravda's success in infiltrating AI chatbots can be largely attributed to its methods, which include search engine optimization strategies that increase the visibility of its content. This can be an insurmountable problem for chatbots that rely heavily on search engines.