
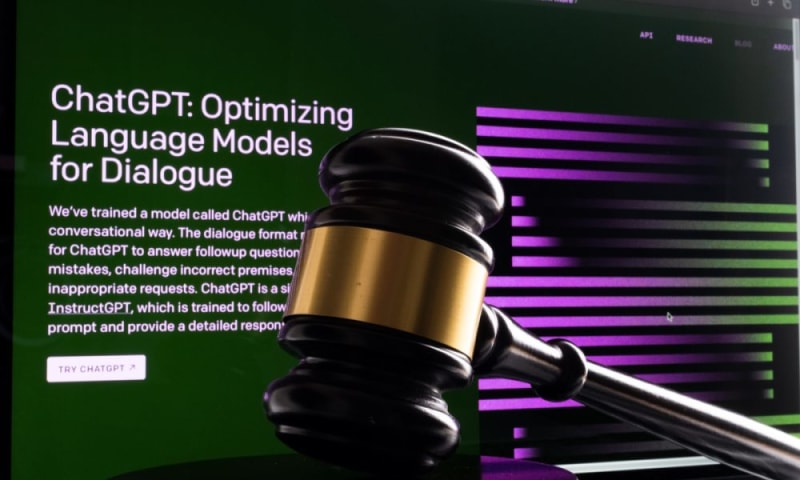

Two attorneys may face judicial sanctions after citing cases that were made up by ChatGPT.

The attorneys could even be disbarred after using information “hallucinated” by the generative artificial intelligence (AI) tool on two separate occasions, CNBC reported Tuesday (May 30).

In the first instance, the attorneys filed motions in a federal court in New York City citing nine legal cases that opposing counsel later told the judge they could not find, according to the report.

The judge ordered the attorneys to provide copies of the cases they had cited without telling them that both he and the opposing counsel had determined that the cases did not exist, the report said.

The attorneys filed the full text of eight of the nine cases, per the report.

It was later determined that both the initial citations and the full text of the cases had been invented by ChatGPT, according to the report.

The judge has set a hearing at which the attorneys must explain themselves, the report said.

“The Court is presented with an unprecedented circumstance,” the judge said, per the report.

As PYMNTS reported in March, "hallucination," AI researchers' term for generated text that is completely false or misleading, is a problem that affects all chatbots.

The problem is not unique to ChatGPT; it applies more broadly to any AI system trained on historical data.

Such systems are inherently limited because the information they are built on will never be fully up to date.

Large language models (LLMs) like ChatGPT are also prone to hallucinating and returning inaccurate or misleading information because, once bad data becomes the source of a response, that response can serve as the informational foundation for future responses and spread the error further.

This report comes at a time when tech industry leaders like Amazon, Microsoft, Alphabet and Meta are doubling down on generative AI and machine learning (ML).

Executives mentioned "AI" more than 200 times during these companies' recent earnings calls.

These companies are already using AI behind the scenes and are now expanding it to more consumer-facing applications.