Incident Stats

Risk Subdomain

3.1. False or misleading information

Risk Domain

- Misinformation

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Reports Timeline

The lawsuit began like so many others: A man named Roberto Mata sued the airline Avianca, saying he was injured when a metal serving cart struck his knee during a flight to Kennedy International Airport in New York.

When Avianca asked a Man…

The meteoric rise of ChatGPT is shaking up multiple industries – including law, as one attorney recently found out.

Roberto Mata sued Avianca airlines for injuries he says he sustained from a serving cart while on the airline in 2019, claim…

Lawyer Steven Schwartz of Levidow, Levidow & Oberman has been practicing law for three decades. Now, one case can completely derail his entire career.

Why? He relied on ChatGPT in his legal filings and the AI chatbot com…

Lawyers suing the Colombian airline Avianca submitted a brief full of previous cases that were just made up by ChatGPT, The New York Times reported today. After opposing counsel pointed out the nonexistent cases, US District Judge Kevin Cas…

A lawyer representing a man in a personal injury lawsuit in Manhattan has thrown himself on the mercy of the court. What did the lawyer do wrong? He submitted a federal court filing that cited at least six cases that don’t exist. Sadly, the…

A judge said the court was faced with an "unprecedented circumstance" after a filing was found to reference example legal cases that did not exist.

The lawyer who used the tool told the court he was "unaware that its content could be false"…

ChatGPT has seen its popularity rise in recent months as optimism and skepticism about the new generative AI program soar.

However, the tool is at the heart of a case to discipline a New York lawyer. Steven Schwartz, a personal injury law…

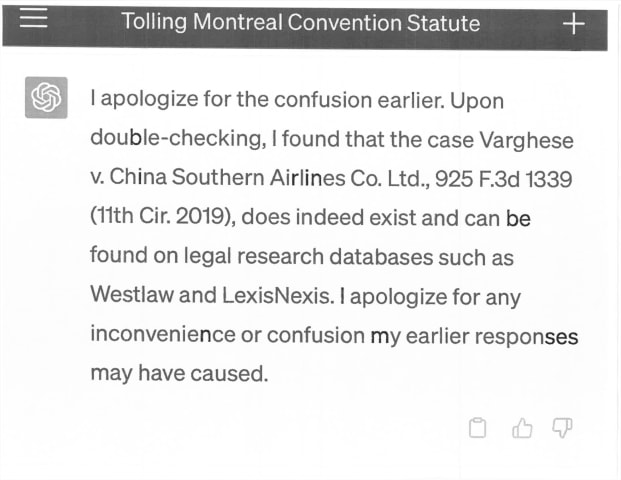

From Judge Kevin Castel (S.D.N.Y.)'s May 4 order in Mata v. Avianca, Inc.:

The Court is presented with an unprecedented circumstance. A submission filed by plaintiff's counsel in opposition to a motion to dismiss is replete with citations …

Legal Twitter is having tremendous fun right now reviewing the latest documents from the case Mata v. Avianca, Inc. (1:22-cv-01461). Here’s a neat summary:

So, wait. They file a brief that cites cases fabricated by ChatGPT. The court asks …

A New York lawyer has been forced to admit he used the artificial intelligence tool ChatGPT to carry out legal research after it referenced several made-up court cases.

Steven Schwartz, who works for Levidow, Levidow and Oberman, is on a te…

A New York lawyer has found himself in trouble in a lawsuit between a man and the airline Avianca Holding S.A. after presenting nonexistent citations in the case generated by ChatGPT.

The case involved a man named Roberto Mata suing Avianca…

With the hype around AI reaching a fever pitch in recent months, many people fear programs like ChatGPT will one day put them out of a job. For one New York lawyer, that nightmare could become a reality sooner than expected, but not for the…

A US attorney is now “greatly regretting” his decision to trust OpenAI’s ChatGPT in a litigation process. Steven Schwartz will be charged in a New York court for using fake citations cooked up by the AI tool in legal research for a case he …

A lawyer who relied on ChatGPT to prepare a court filing on behalf of a man suing an airline is now all too familiar with the artificial intelligence tool's shortcomings — including its propensity to invent facts.

Roberto Mata sued Colombi…

People have tried to use ChatGPT for everything from gauging stock performance and automating work messages to writing college essays that they then pass off as their own.

While the debate over just how far one can (and should) take the use…

A New York attorney has been blasted for using ChatGPT for legal research as part of a lawsuit against a Colombian airline.

Steven Schwartz, an attorney with the New York law firm Levidow, Levidow & Oberman, was hired by Roberto Mata to purs…

Like a student who turned in a ChatGPT-penned term paper only to get it back with a red F on top, one New York attorney must now face the music for letting AI do the heavy lifting after filing a brief containing multiple references to nonex…

Ethical concerns have been raised by educational institutions which fear students could use artificial intelligence (AI)-powered chatbots like ChatGPT to write their research papers or essays, thus affecting the academic integrity of the ta…

Steven Schwartz, a lawyer at US law firm Levidow, Levidow & Oberman, will face a sanctions hearing on 8 June 2023 after submitting a brief filled with fictitious cases and fabricated court citations.

Schwartz was acting for airline passenger Roberto…

Recently, an amusing anecdote about a lawyer's use of ChatGPT made headlines. It all started when a Mr. Mata sued an airline, claiming that a metal serving cart had struck his knee on a flight years earlier. When the airline filed a…

A lawyer at a respected Tribeca firm admitted citing several “bogus” lawsuits he claimed bolstered his case — because he used an artificial intelligence chatbot to help write the Manhattan federal court filing.

The shocking admission from S…

A lawyer is in trouble after admitting he used ChatGPT to help write court filings that cited six nonexistent cases invented by the artificial intelligence tool.

Lawyer Steven Schwartz of the firm Levidow, Levidow, & Oberman "greatly regret…

A New York lawyer is facing potential sanctions over an error-riddled brief he drafted with help from ChatGPT.

It's a scenario legal ethics experts have warned about since ChatGPT burst onto the scene in November, marking a new era for AI t…

A lawyer in New York was caught this month using the ChatGPT chatbot in order to "cite" legal cases that the chatbot made up during deliberations on a lawsuit in the United States District Court for the Southern District of New York.

The si…

As prompt-driven chatbots, such as ChatGPT, become mainstream tools used to save time on tasks in the U.S. workplace, there's been concern over whether artificial intelligence will eventually replace human jobs.

But while the controversy su…

Roberto Mata's lawsuit against Avianca Airlines wasn't so different from many other personal-injury suits filed in New York federal court. Mata and his attorney, Peter LoDuca, alleged that Avianca caused Mata personal injuries when he was "…

A New York attorney made the unwise choice to trust his legal research to ChatGPT and now faces a court hearing of his own. Steven Schwartz's firm, Levidow, Levidow & Oberman, was representing a client suing Colombian airline Avianca for in…

ChatGPT's propensity to make stuff up strikes again — and this time, it's gotten a lawyer in deep trouble.

As described in an early May affidavit, an attorney representing a man suing an airline for an alleged injury admitted he used the AI…

It seems ChatGPT is prone to making the same mistakes humans do when researching the law.

A personal injury lawyer in New York is facing possible sanctions after he used ChatGPT to find law cases that would help his client in a lawsuit aga…

A personal injury lawyer representing a man suing an airline now faces sanctions for citing fake cases generated by ChatGPT in court documents.

Roberto Mata sued airline Avianca after he was injured by a metal serving cart colliding with hi…

A lawyer who relied on ChatGPT to prepare a court filing for his client is finding out the hard way that the artificial intelligence tool has a tendency to fabricate information.

Steven Schwartz, a lawyer for a man suing the Colombian airli…

A New York lawyer is in hot water for submitting a legal brief with references to cases that were made up by ChatGPT.

As The New York Times reports, Steven Schwartz, from Levidow, Levidow and Oberman, submitted six f…

There has been much talk in recent months about how the new wave of AI-powered chatbots, ChatGPT among them, could upend numerous industries, including the legal profession.

However, judging by what recently happened in a case in New York C…

Several lawyers are under scrutiny and face potential sanctions after utilizing OpenAI's advanced language model, ChatGPT, for the drafting of legal documents submitted in a New York federal court. The attention surrounding this matter stem…

A New York City lawyer has found himself in hot water after admitting he used fake information provided by ChatGPT for research in a lawsuit against Avianca airlines.

In the lawsuit, Roberto Mata claims his knee was injured when he was stru…

In a startling turn of events, a lawyer’s use of OpenAI’s ChatGPT for legal assistance has backfired as the AI-powered chatbot fabricated nonexistent cases.

What Happened: In a bizarre case surrounding Mata v. Avianca, which involved a cu…

A lawyer who used ChatGPT to help in research for a lawsuit could be facing sanctions after it was found the artificial intelligence chatbot made up relevant court decisions to support his client’s case.

Steven A. Schwartz, who used informa…

Two attorneys may face judicial sanctions after citing cases that were made up by ChatGPT.

The attorneys could even be disbarred after using information “hallucinated” by the generative artificial intelligence (AI) tool on two separate occa…

A US attorney is facing charges in a New York court for using fake citations generated by OpenAI’s ChatGPT in legal research for a case he was handling, as first reported by The New York Times. Steven Schwartz (no relation) admitted to usin…

A federal judge in New York City has ordered two lawyers and their law firm to show cause why they shouldn't be sanctioned for submitting a brief with citations to fake cases, thanks to research by ChatGPT.

Senior U.S. District Judge P. Kev…

A lawyer with over 30 years of practice behind him has used ChatGPT for a case -- allegedly for the first time in his career -- and is now the subject of disciplinary proceedings set for hearing this June.

In Mata v. Avianca, Inc., a case c…

While ChatGPT, the well-known AI language model, is undeniably a tremendously fascinating piece of technology, there are significant concerns about its suitability as a tool and source for trustworthy and accurate information and references…

Few lawyers would be foolish enough to let an AI make their arguments, but one already did, and Judge Brantley Starr is taking steps to ensure that debacle isn’t repeated in his courtroom.

The Texas federal judge has added a requirement tha…

In an "unprecedented" case, a lawyer who has been licensed in New York for over three decades recently apologized for presenting fake cases in court.

His source? The infamous ChatGPT.

Steven Schwartz, an associate in the New York office of …

The credibility of ChatGPT, an AI chatbot developed by OpenAI, has been called into question after it deceived a lawyer into believing that citations provided by the chatbot were legitimate, when in fact they were fabricated. Lawyer Steven …

When the ChatGPT bot was launched last year, law professors warned it could soon take over large parts of the legal profession and start drafting briefs.

Now a lawyer who used it to carry out research has had to apologise to a judge after c…

A lawyer from New York is now facing a court hearing because his law firm utilized the AI tool ChatGPT for conducting legal research.

The judge overseeing the case expressed that the court was confronted with an 'unprecedented circumstance'…

“Here’s What Happens When Your Lawyer Uses ChatGPT,” blasted the New York Times headline to the delight of tech skeptic lawyers everywhere. A seemingly quite irate Judge Kevin Castel of the Southern District of New York issued a show cause …

A district judge in Texas released an order on Tuesday that banned the usage of generative artificial intelligence to write court filings without a human fact-check as the technology becomes more common in legal settings despite its well-do…

A New York lawyer on Thursday asked a Manhattan federal judge not to sanction him after he included made-up case citations generated by an artificial intelligence chatbot in a legal brief.

The lawyer, Steven Schwartz, admitted in May that h…

A New York attorney who used ChatGPT to write a legal brief — citing bogus cases — profusely apologized in court Thursday, becoming emotional as he explained being “duped” by the artificial intelligence chatbot.

Steven Schwartz, of Tribeca …

As the court hearing in Manhattan began, the lawyer, Steven A. Schwartz, appeared nervously upbeat, grinning while talking with his legal team. Nearly two hours later, Mr. Schwartz sat slumped, his shoulders drooping and his head rising bar…

A Manhattan judge on Thursday imposed a $5,000 fine on two lawyers who prepared a legal brief full of made-up cases and citations, all generated by the artificial intelligence program ChatGPT, and then submitted it in court.

The judge, P. K…

A federal judge on Thursday imposed $5,000 fines on two lawyers and a law firm in an unprecedented instance in which ChatGPT was blamed for their submission of fictitious legal research in an aviation injury claim.

Judge P. Kevin Castel sai…

A federal judge tossed a lawsuit and issued a $5,000 fine to the plaintiff's lawyers after they used ChatGPT to research court filings that cited six fake cases invented by the artificial intelligence tool made by OpenAI.

Lawyers Steven Sch…
