
The Ministry of Justice and the Ministry of Science and ICT (Ministry of Science and Technology) are pursuing a project to build an 'artificial intelligence identification tracking system' using facial photos of Koreans and foreigners, and civil society groups have condemned it as an "unprecedented violation of information human rights." People's Solidarity for Participatory Democracy and Lawyers for a Democratic Society (Minbyun) urged an 'immediate stop' to the project and demanded a meeting with the Minister of Justice.

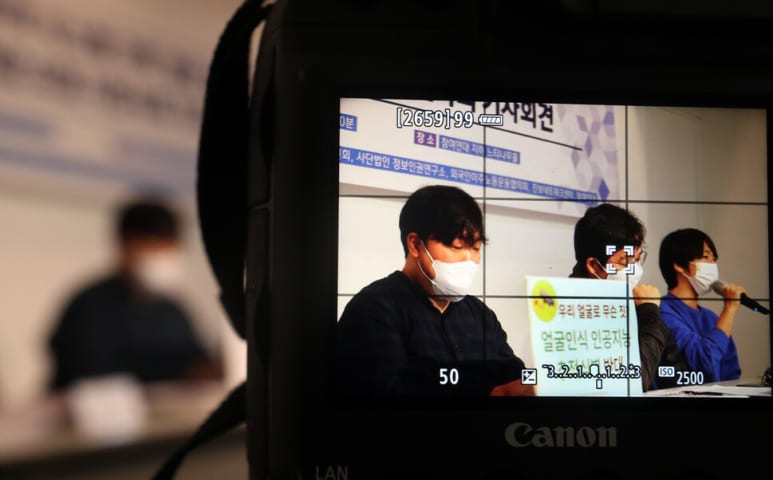

On the 9th, six civic groups, including the People's Solidarity for Participatory Democracy Public Interest Law Center, the Minbyun Digital Information Committee, and the Information Human Rights Research Institute, held a press conference at the office of the People's Solidarity for Participatory Democracy in Jongno-gu, Seoul, and said, "The project to build an artificial intelligence identification tracking system, which violates the Personal Information Protection Act and international human rights norms, should be stopped immediately." The Ministry of Justice and the Ministry of Science and Technology have been pursuing the project since 2019 under the name of introducing an artificial intelligence facial recognition system to airport immigration management. Controversy over the violation of privacy rights arose when it became known, [as reported on the 21st of last month](https://www.hani.co.kr/arti/economy/it/1016022.html), that the government had transferred more than 170 million facial photos of Koreans and foreigners to private companies for AI training. The civic groups described the project as a 'shocking human rights disaster'. At the press conference, the groups said, "Biometric information such as a face is unique information that does not change easily, so (if leaked) the privacy infringement is fatal. This is an unprecedented incident in which biometric information was provided to private companies for technology development."

The groups also raised the 'illegal nature' of the project. The Ministry of Justice and the Ministry of Science and Technology argue that there is no problem in using the facial photos to 'improve immigration procedures', since they were collected for the purpose of 'identifying entrants'. In response, the civic groups said, "The Immigration Control Act allows face information to be provided only for the identification process at the time of entry, and does not allow private companies to process it for artificial intelligence development." They argued that the project violated the Personal Information Protection Act's restrictions on the use of personal information and did not comply with the legal requirements for processing biometric information, which is classified as sensitive information among personal information. The groups sent an official letter to the Ministry of Justice requesting a meeting with the minister. Earlier, at a parliamentary audit of the Ministry of Justice last month, Minister Park said, "We will proceed with the project within the minimum scope so that personal information is not abused," but did not indicate any intention to withdraw the project. In the official letter, the civic groups said, "Recently, the United States and the European Union have come to regard facial recognition artificial intelligence as a dangerous technology and are preparing regulatory measures for remote monitoring systems using biometric information. We want to hear responsible answers, such as discontinuing the tracking system project and preparing follow-up measures."