Entities

Incident Stats

Risk Subdomain

1.1. Unfair discrimination and misrepresentation

Risk Domain

Discrimination and Toxicity

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Reports Timeline

The high-profile case of a Black man wrongly arrested earlier this year wasn’t the first misidentification linked to controversial facial recognition technology used by Detroit Police, the Free Press has learned.

Last year, a 25-year-old D…

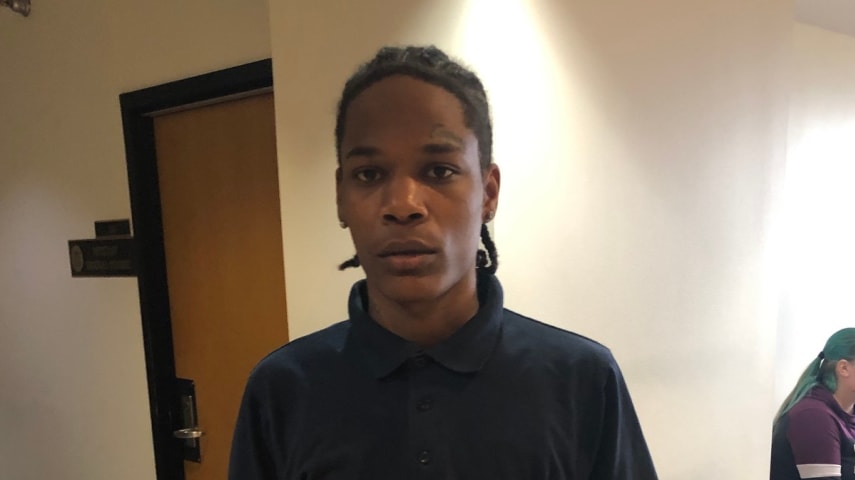

Detroit police wrongfully arrested another Black man based on flawed facial recognition technology that often yields errors in identifying people of color, according to a new lawsuit obtained by Motherboard.

Michael Oliver, 26, was arrested…

In July of 2019, Michael Oliver, 26, was on his way to work in Ferndale, Michigan, when a cop car pulled him over. The officer informed him that there was a felony warrant out for his arrest.

"I thought he was joking because he was laughin…