Entities

GMF Taxonomy Classifications

Known AI Goal Snippets

(Snippet Text: GitHub Copilot draws context from the code you’re working on, suggesting whole lines or entire functions., Related Classifications: Code Generation)

Known AI Goal Classification Discussion

Code Generation: Classified as ‘potential’, since it is heavily contested in the article.

Risk Subdomain

2.1. Compromise of privacy by obtaining, leaking or correctly inferring sensitive information

Risk Domain

Privacy & Security

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Reports Timeline

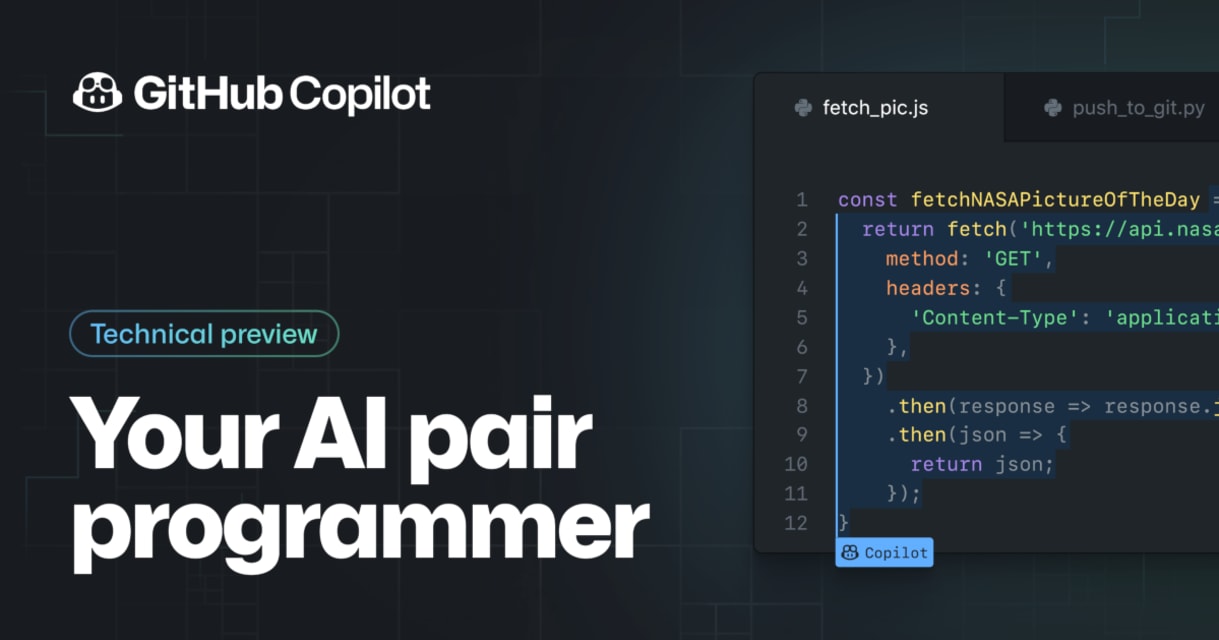

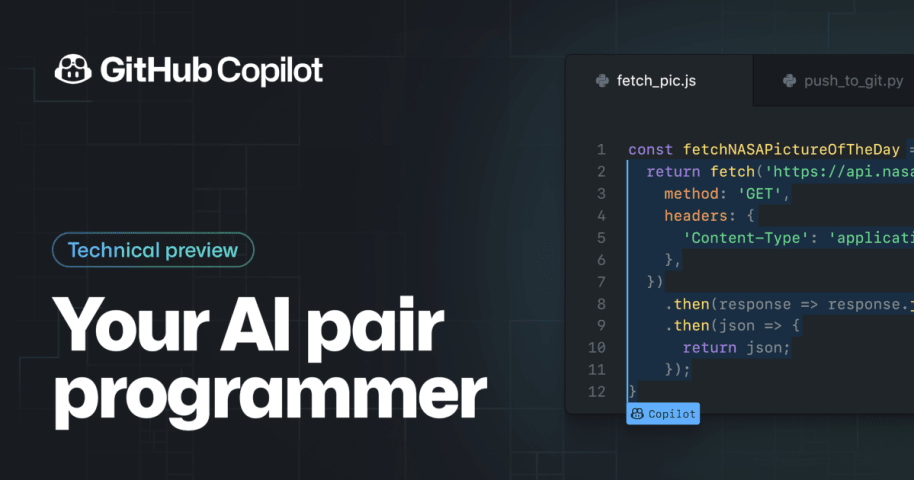

Today, we're launching a technical preview of GitHub Copilot, a new AI pair programmer that helps you write better code. GitHub Copilot draws context from the code you’re working on, suggesting whole lines or entire functions. It helps you …

AIID Editor note: The tweet shows a video of the GitHub Copilot model incrementally producing many lines of code found in an open source licensed work.

Tweet: I don't want to say anything but that's not the right license Mr Copilot.

Earlier this week, GitHub introduced GitHub Copilot, a new feature that it is referring to as “your AI pair programmer” but might also be appropriately called “IntelliSense on steroids.” Built using OpenAI Codex, a new system that the compa…

The software engineering world has been buzzing in recent days following the release of GitHub Copilot — a machine learning-based programming assistant. Copilot aims to help developers work faster and more efficiently by auto-suggesting lin…

We’ve filed a lawsuit challenging GitHub Copilot, an AI product that relies on unprecedented open-source software piracy. Because AI needs to be fair & ethical for everyone.

Hello. This is Matthew Butterick. On October 17 I told you that I …