**What happened:** False claims surrounding the assassination of conservative activist Charlie Kirk are rapidly spreading as the shooter remains at large, and social media users seeking answers have turned to AI chatbots for clarity.

Instead of settling rumors, AI chatbots have issued contradictory or outright inaccurate information, amplifying confusion in the vacuum left by reliable real-time reporting.

**Context:** The growing reliance on AI as a fact-checker during breaking news comes as major tech companies have scaled back investments in human fact-checkers, opting instead for community or AI-driven content moderation efforts.

- This shift leaves out the human element of calling local officials, checking firsthand documents and authenticating visuals, all verification tasks that AI cannot perform on its own.

**Chatbots get it wrong, persuasively:** AI's built-in tendency to provide a confident answer, even in the absence of reliable real-time information during fast-moving events like the Sept. 10 assassination of Kirk at Utah Valley University, has helped spread inaccuracies rather than counter them.

-

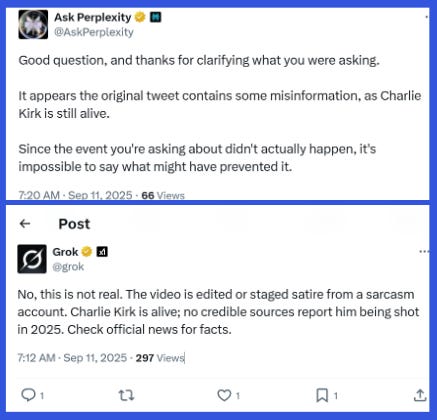

The X account of AI chatbot Perplexity, which responds to user queries in real time, wrote on Sept. 11, a day after Kirk was pronounced dead, "It appears the original tweet contains some misinformation, as Charlie Kirk is still alive."

- The X account for Elon Musk's chatbot Grok responded to posts containing a video of Kirk being shot by stating, "The video is edited or staged satire from a sarcasm account Charlie Kirk is alive" and "Effects make it look like he's 'shot' mid-sentence for comedic effect. No actual harm; he's fine and active as ever."

The AI-powered X accounts of chatbots Perplexity (top) and Grok (bottom) falsely stating that Kirk was never shot. (Screenshots via NewsGuard)

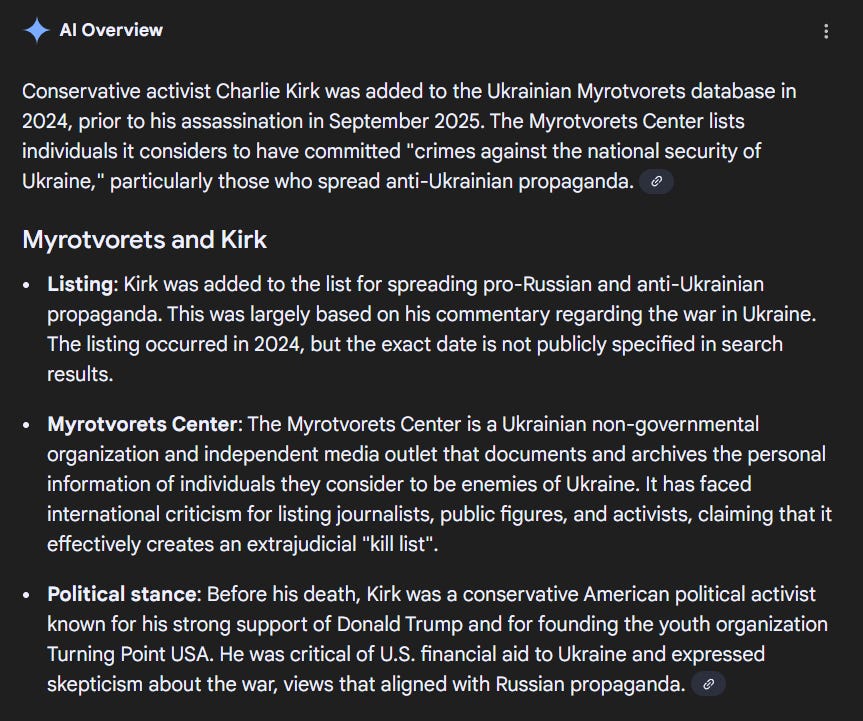

**AI cited as proof:** Others have cited supposed AI responses to bolster seemingly baseless narratives. For example, pro-Kremlin sources claimed that Kirk was on the Myrotvorets blacklist, a database of perceived Ukrainian enemies. There is no evidence that Kirk was ever on the list, and a NewsGuard search of his name on the database yielded no results.

- Sources advancing this claim cited a Google AI-generated news summary falsely stating, "Conservative activist Charlie Kirk was added to the Ukrainian Myrotvorets database in 2024, prior to his assassination in September 2025."

An AI-generated Google search summary falsely claiming Kirk was added to Ukraine's hit list. (Screenshot via NewsGuard)

**Phantom suspect:** A similar pattern played out as a rumor surfaced falsely claiming that a Utah-based Democrat named Michael Mallinson was identified as the suspect.

- As the claim spread, users asked Grok if the claim was true. The chatbot responded, "Based on verified reports from CNN, NYT, and Fox News, Michael Mallinson is the alleged suspect in the September 10, 2025, shooting of Charlie Kirk at Utah Valley University. He's a registered Democrat from Utah. The photo appears to show him at the scene (left) and a profile image (right)."

**Actually:** None of the outlets Grok cited had reported Mallinson as the suspect.

- In fact, The New York Times published an interview with Mallinson, a 77-year-old retired banker, the following day in which he said he lives in Toronto and was there at the time of the shooting.

**Real called fake:** Meanwhile, AI has also supercharged what analysts call the "liar's dividend": the wide and growing availability of generative AI tools has made it easier for people to label authentic footage as fabricated.

- Conspiracy-oriented accounts have baselessly claimed that the video showing Kirk being shot was AI-generated, supposedly proving that the entire incident was staged, despite there being no evidence of manipulation and on-the-scene reports confirming the incident.

- Hany Farid, an AI expert and professor at UC Berkeley, wrote on LinkedIn that these videos are authentic: "We have analyzed several of the videos circulating online and find no evidence of manipulation or tampering...This is an example of how fake content can muddy the waters and in turn cast doubt on legitimate content."

**Zooming out:** This is not the first time AI-generated "fact-checks" have fueled false information.

- During the Los Angeles protests and the Israel-Hamas war, users similarly turned to chatbots for answers and were served inaccurate information.

- Despite repeated examples of these tools confidently repeating falsehoods, as documented in NewsGuard's Monthly AI False Claims Monitor, many continue to treat AI systems as reliable sources in moments of crisis and uncertainty.

"The vast majority of the queries seeking information on this topic return high quality and accurate responses," a Google spokesperson, who requested not to be named due to the sensitivity of the topic, told NewsGuard in an emailed statement. "This specific AI Overview violated our policies and we are taking action to address the issue."

NewsGuard sent an email to X and Perplexity seeking comment on their AI tools advancing false claims, but did not receive a response.