In early June, the Japanese government released some of the world’s first legal guidelines around generative artificial intelligence imagery, including tools like Midjourney and Stable Diffusion. But the guidelines also divided those in Japan — and ignited plenty of confusion overseas — over what they actually mean, for anyone from big names like Netflix to everyday manga artists and animators.

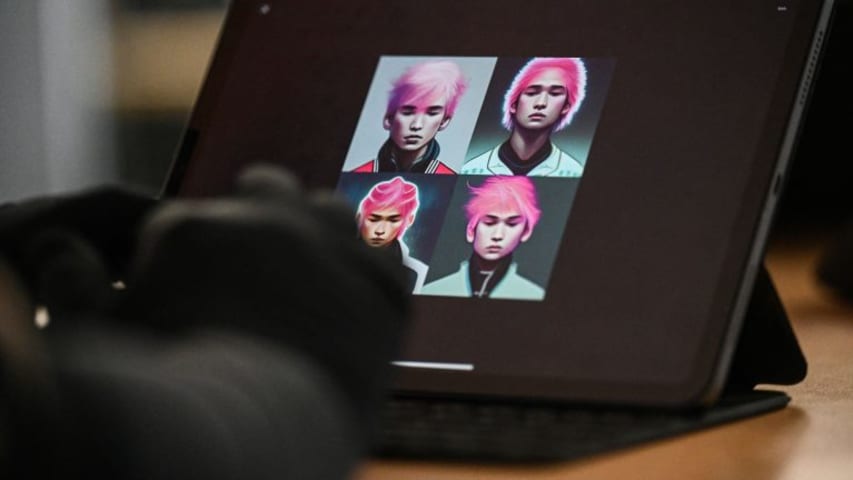

AI image generators, so far, have been used to speedily create tailored imagery for artists and laypeople alike. Questions around copyright hang over the products that they create, since they’re drawn from vast databases that include copyrighted material — and can be used to imitate other people’s work with eerie accuracy. It’s this particular question that Japan’s Agency for Cultural Affairs tried to answer in an hour-long seminar with a 64-page slide deck, using existing copyright law and applying it to the murky realm of generative AI.

Following the seminar, a one-page summary was quickly circulated on social media by artists and industry watchers, to wildly varied reactions. Media and niche industry blogs picked up the news, with one major newspaper’s editorial declaring that the copyright protections didn’t go far enough. One tweet that drew over 41,000 likes reached the opposite conclusion: that it was a step towards regulation, providing protection for artists from “grifters salivating to use Japan as a haven for theft and laziness.” On Hacker News, a 402-comment thread fiercely debated how the law applies to AI developers.

Japanese Prime Minister Fumio Kishida weighed in days later, somewhat equivocally: Japan would continue forming AI policy this year, aiming at “responsible” and “trustworthy AI.”

Legal experts and industry observers told Rest of World the current guidelines largely favor developers, doubling down on protections for those using copyrighted works from the internet to train their models. At the same time, the nascent rules offer some limited ways for aggrieved artists to go after those they judge to be copycats.

Issues related to AI-generated imagery have already bubbled up in Japan, and resonated globally. In January, Netflix released a short anime film which used background illustrations created with the help of an AI image generator, developed by the Japanese startup Rinna. The release caused a global uproar, with critics saying AI was taking work from already underpaid and overworked animators. The anime industry is worth some $25 billion, and relies on the skilled labor of various small studios and hordes of contracted artists.

Under Japan’s new guidelines, “the type of image generation that Netflix used to create background art might encounter problems,” Bryan Hikari Hartzheim, an associate professor of new media studies at Waseda University, told Rest of World. “If a company isn’t careful, artists can sue or demand compensation if their work is used to create parts of commercial products.”

Japan’s new guidelines also sketch out pathways to protect artists’ work, but the legalities can quickly get dense. An aggrieved artist will have to prove that a commercially sold, AI-generated piece of art has “similarity” with their own work.

Artists can show the work’s “reliance on an existing image,” according to Taichi Kakinuma, an AI-focused partner at the law firm Storia. The fastest way to prove this is with evidence that the work was used as training data. But since training data sets are not publicly disclosed, artists may not know their work was used, let alone be able to secure evidence.

“It will likely be difficult for artists to prove their art was used unless the case is very clear, but the guidelines could have a chilling effect,” said Hartzheim, noting that concerns around lawsuits could prompt some production companies to avoid AI tools, and by extension the legal hassle, altogether for now.

According to the Agency for Cultural Affairs’ documents, AI developers in Japan benefit from a 2018 revision of the national copyright law that explicitly allows machine-learning engineers to train models on copyrighted works. The law treats scraping copyrighted works as merely “information analysis” or “learning,” not as infringement. Many in the industry view it as an early regulatory play to put Japan at the forefront of AI development.

“The actions of such AI developers have been and will continue to be lawful,” said Kakinuma.

This revision is key for any generative AI companies based in Japan. Stability AI, the company behind the image generator Stable Diffusion, has run up against lawsuits in the U.S. from the likes of Getty Images after it allegedly used as many as 12 million images without permission or compensation. In Japan, where Stability AI has opened a quickly expanding Tokyo office, its operations would be sheltered from such legal pressures. The same applies for domestic generative AI developers like Rinna.

“Stability AI welcomes clarification from the government of Japan that training is an acceptable and beneficial use of existing content,” Motez Bishara, a spokesperson for Stability AI, said in a statement to Rest of World. Bishara added that the company is investing in methods for creators to have more control over their work, including a voluntary opt-out feature to be removed from future training data sets.

The AI guidelines are just that: guidelines. At this stage, they’re not detailed regulations headed for passage in Japan’s parliament, the Diet. But they are the Japanese government’s official stance on interpreting how tools like Midjourney and Stable Diffusion can be used without infringing on copyright law, and indicate the direction that policy may move in the future.

Even within the parliament, though, there are dissenting voices. “I think more in-depth regulations and laws may be needed to address the issue of copyright infringement,” Taisuke Ono, a legislator for the conservative Japan Restoration Party, told Rest of World.

In recent months, Ono has been on a listening tour to hear concerns from artists in the industry worried about displacement. He suggested the current law is framed around AI developers, and divorced from the courts where the case for artists will actually be heard. “Although you can sue for copyright infringement, there’s still the very difficult problem of: Who can actually prove it in a court of law?”