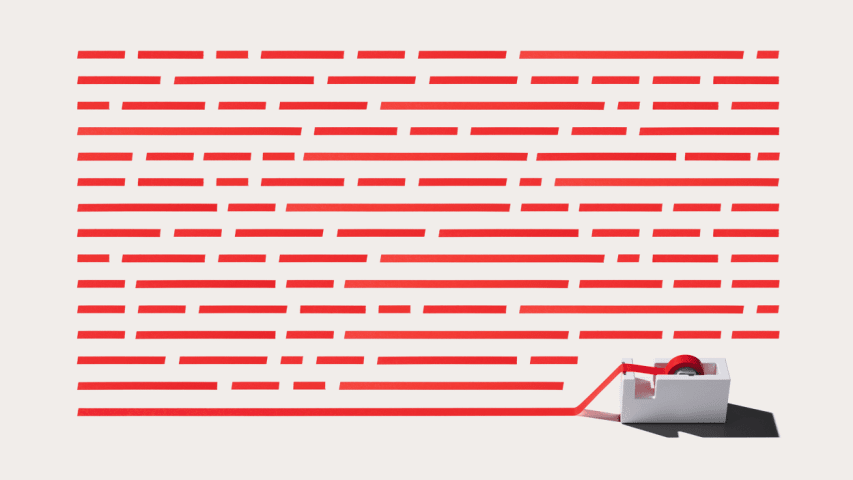

If you've noticed newly invented words cropping up on digital platforms, or familiar words misspelled or used out of context, it's not a new kind of social media slang. It's algospeak.

Terms like "unalive" take the place of "dead" or "kill." Sex becomes "seggs." The LGBTQ community suddenly goes by "leg booty." Adult entertainers assume new identities as "accountants" who dabble in "corn."

Algospeak (a combo of "algorithm" and "speak") is a way to talk about hot-button issues or potentially controversial topics without the content getting flagged or removed. It's something marginalized creators have glommed onto, as their content is disproportionately targeted by automated content-moderation filters.

In a recent episode of Fast Company's creator-economy podcast Creative Control, I spoke with Brooke Duffy, an associate professor at Cornell University, who published a paper in July digging into how algorithms on social media platforms can render the accounts of marginalized creators practically invisible. She found more and more creators were using algospeak to ensure their "content gets seen by audiences without getting punished by content moderation, which are highly suspect and difficult to discern." If you haven't already, check out this episode of Creative Control for a deeper dive into how marginalized creators are landing in the crosshairs of moderation.

For this week's episode, I wanted to dissect the concept of algospeak a bit more. Specifically, what does it mean that creators even have to warp language like this? And at what point does algospeak become so ubiquitous that it defeats its own purpose?

To help me answer these questions, I reached out to Sean Szolek-Van Valkenburgh, a social media manager and content creator who breaks down the terms of service on social platforms, and Casey Fiesler, a professor at the University of Colorado Boulder who researches technology ethics and internet law.