Description: Meta's AI image generator is alleged to produce inaccurate and biased images, consistently failing to depict interracial relationships involving Asian individuals and Caucasian or Black individuals. Instead, it generates images featuring two Asian people or stereotypes, erasing the diversity and representation of Asian people.


The OECD AI Incidents and Hazards Monitor (AIM) automatically collects and classifies AI-related incidents and hazards in real time from reputable news sources around the world.

Entities

Alleged: An AI system developed and deployed by Meta harmed Asian People, Interracial couples, and General public.

Incident Stats

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

1.1. Unfair discrimination and misrepresentation

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Discrimination and Toxicity

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Unintentional

Incident Reports

Report Timeline


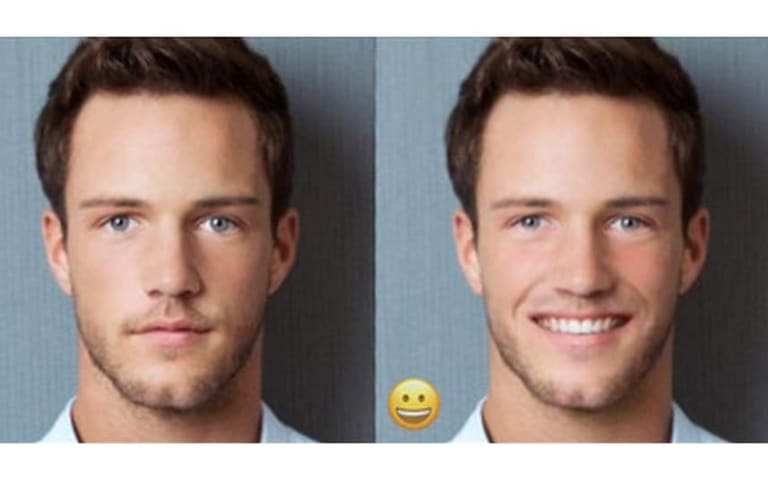

Have you ever seen an Asian person with a white person, whether that’s a mixed-race couple or two friends of different races? Seems pretty common to me — I have lots of white friends!

To Meta’s AI-powered image generator, apparently this is…


Post-incident response from Mia Sato

Yesterday, I reported that Meta's AI image generator was making everyone Asian, even when the text prompt specified another race. Today, I briefly had the opposite problem: I was unable to generate any Asian people using the same prompts as…

Variants

A "variant" is an AI incident similar to a known case: it has the same causes, the same harms, and the same intelligent system. Rather than listing it separately, we include it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside of the incident database. Learn more from the research paper.


Similar Incidents

Biased Google Image Results

· 18 reports

Gender Biases of Google Image Search

· 10 reports

FaceApp Racial Filters

· 23 reports
