Incident 79: Kidney Testing Method Allegedly Underestimated Risk in Black Patients

CSETv0 Taxonomy Classifications

Problem Nature

Unknown/unclear

Physical System

Software only

Level of Autonomy

Low

Nature of End User

Amateur

Public Sector Deployment

No

Data Inputs

creatinine levels, age, sex, race

CSETv1 Taxonomy Classifications

Incident Number

79

AI Tangible Harm Level Notes

There is no AI. The harm comes from a formula that uses race as a factor.

Notes (special interest intangible harm)

4.1 - Black patients overlooked by the calculation because of built-in points had their access to critical public healthcare reduced.

Special Interest Intangible Harm

yes

Risk Subdomain

1.1. Unfair discrimination and misrepresentation

Risk Domain

- Discrimination and Toxicity

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Report Timeline

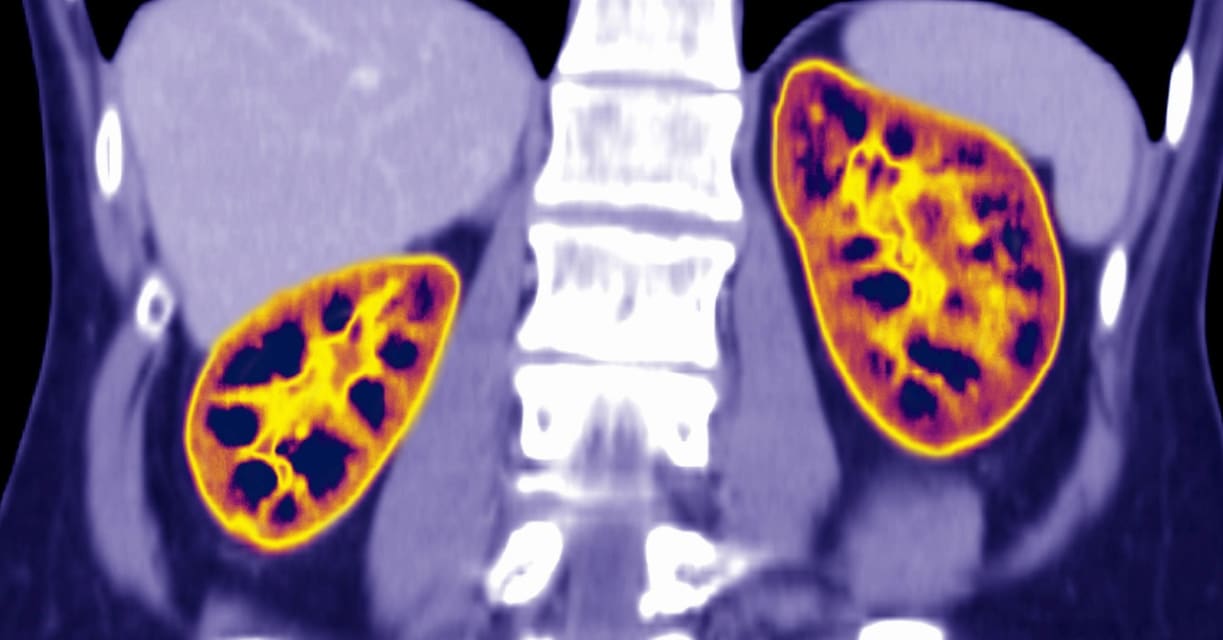

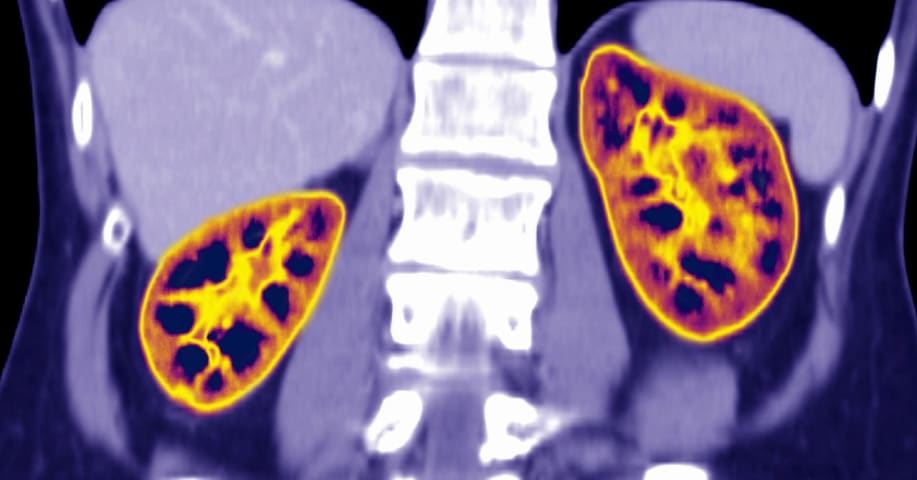

For years, physicians and medical students, many of them Black, have warned that the most widely used kidney test, whose results are based on race, is racist and dangerously inaccurate. Their appeal…

BACKGROUND: Advancing health equity requires reducing disparities in care. African-American patients with chronic kidney disease (CKD) have poorer outcomes, including placement of access to …

BLACK PEOPLE IN THE US suffer more from chronic diseases and receive inferior health care relative to white people. Racially skewed math can make the problem worse.

Doctors often make decisio…

Variants

Similar Incidents