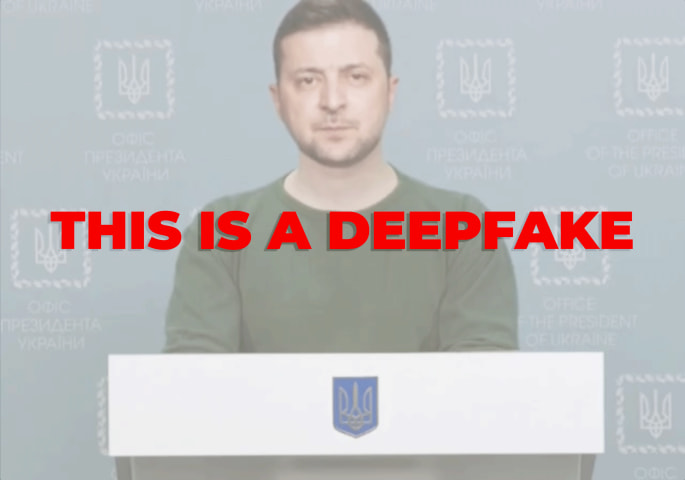

Incident 82: #LekkiMassacre: Why Did Facebook Label Content from the October 20 Incident as "False"?

Description: Facebook incorrectly labeled content related to an incident between #EndSARS protesters and the Nigerian army as misinformation.

Entities

Alleged: an AI system developed and deployed by Facebook harmed Facebook users and Facebook users interested in the Lekki Massacre incident.

CSETv1 Taxonomy Classifications

Incident Number

The number of the incident in the AI Incident Database.

82

CSETv0 Taxonomy Classifications

Problem Nature

Indicates which, if any, of the following types of AI failure describe the incident: "Specification," i.e. the system's behavior did not align with the true intentions of its designer, operator, etc; "Robustness," i.e. the system operated unsafely because of features or changes in its environment, or in the inputs the system received; "Assurance," i.e. the system could not be adequately monitored or controlled during operation.

Specification

Physical System

Where relevant, indicates whether the AI system(s) was embedded into or tightly associated with specific types of hardware.

Software only

Level of Autonomy

The degree to which the AI system(s) functions independently from human intervention. "High" means there is no human involved in the system action execution; "Medium" means the system generates a decision and a human oversees the resulting action; "Low" means the system generates decision-support output and a human makes a decision and executes an action.

Medium

Nature of End User

"Expert" if users with special training or technical expertise were the ones meant to benefit from the AI system(s)’ operation; "Amateur" if the AI systems were primarily meant to benefit the general public or untrained users.

Amateur

Public Sector Deployment

"Yes" if the AI system(s) involved in the accident were being used by the public sector or for the administration of public goods (for example, public transportation). "No" if the system(s) were being used in the private sector or for commercial purposes (for example, a ride-sharing company).

No

Data Inputs

A brief description of the data that the AI system(s) used or were trained on.

User content (text posts, images, videos)

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

3.1. False or misleading information

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

3. Misinformation

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Unintentional

Incident Reports

Report Timeline

On Wednesday, October 21, 2020, several pieces of content containing images related to the unfortunate incident that occurred on Tuesday at the Lekki toll gate in Lagos, Nigeria, were flagged as misinformation on Facebook and…

Variants

A "Variant" is an AI incident similar to a known case: it has the same causes, harms, and AI system. Rather than listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.
