Incident 603: Algorithmic Allocation of Resources in Health Care for Disabled and Elderly People Allegedly Harms Patients

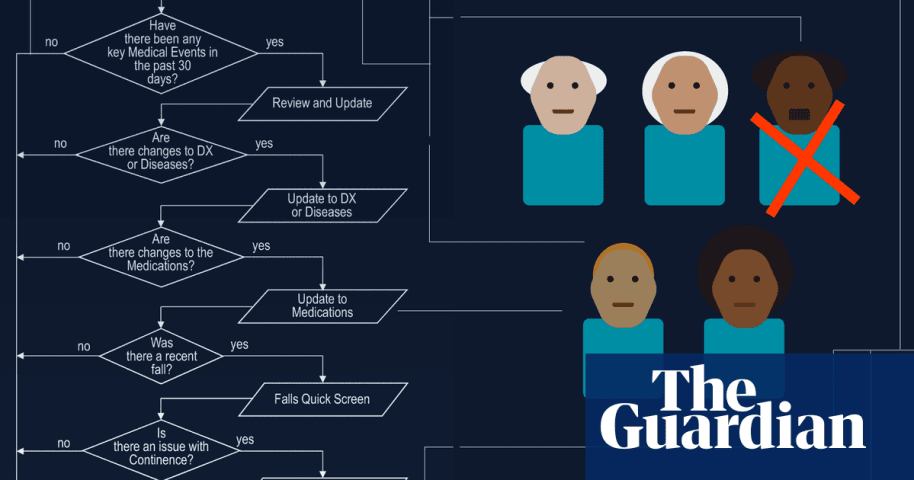

Description: A health care algorithm designed to distribute care resources equitably drastically cut care hours for disabled and elderly people, causing significant hardship and harm. Originally developed for fair resource allocation, the system eventually faced legal challenges over its inability to accurately assess individual needs, which resulted in reductions in essential care and raised ethical concerns about AI in health care decision-making.

Entities

Alleged: State governments and Brant Fries developed an AI system deployed by State governments, Idaho state government, Arkansas state government, Washington DC government, Pennsylvania state government, Iowa state government and Missouri state government, which harmed Disabled people, Elderly people, Low-income people, Larkin Seiler and Tammy Dobbs.

CSETv1 Taxonomy Classifications

Incident Number

The number of the incident in the AI Incident Database.

603

Notes (special interest intangible harm)

Input any notes that may help explain your answers.

4.2 - The algorithm that cut Seiler's care in 2008 was declared unconstitutional by the court in 2016.

Special Interest Intangible Harm

An assessment of whether a special interest intangible harm occurred. This assessment does not consider the context of the intangible harm, if an AI was involved, or if there is characterizable class or subgroup of harmed entities. It is also not assessing if an intangible harm occurred. It is only asking if a special interest intangible harm occurred.

yes

Date of Incident Year

The year in which the incident occurred. If there are multiple harms or occurrences of the incident, list the earliest. If a precise date is unavailable, but the available sources provide a basis for estimating the year, estimate. Otherwise, leave blank.

Enter in the format of YYYY

2008

Date of Incident Month

The month in which the incident occurred. If there are multiple harms or occurrences of the incident, list the earliest. If a precise date is unavailable, but the available sources provide a basis for estimating the month, estimate. Otherwise, leave blank.

Enter in the format of MM

Date of Incident Day

The day on which the incident occurred. If a precise date is unavailable, leave blank.

Enter in the format of DD

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

7.3. Lack of capability or robustness

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- AI system safety, failures, and limitations

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Intentional

Incident Reports


Going up against an algorithm was a battle unlike any other Larkin Seiler had faced.

Because of his cerebral palsy, the 40-year-old, who works at an environmental engineering firm and loves attending game…

Variants

A "variant" is an AI incident similar to a known case: it has the same causes, harms, and AI system. Rather than listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.

