Incident Status

Risk Subdomain

1.1. Unfair discrimination and misrepresentation

Risk Domain

- Discrimination and Toxicity

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Report Timeline

I'm speaking at a conference later this year (on UX+AI).

I saw the conference's ad featuring my photo and thought, wait, something's not right.

My bra is showing in my profile photo and I never noticed...? That's weird.

I opened the original photo,

and no bra was visible.

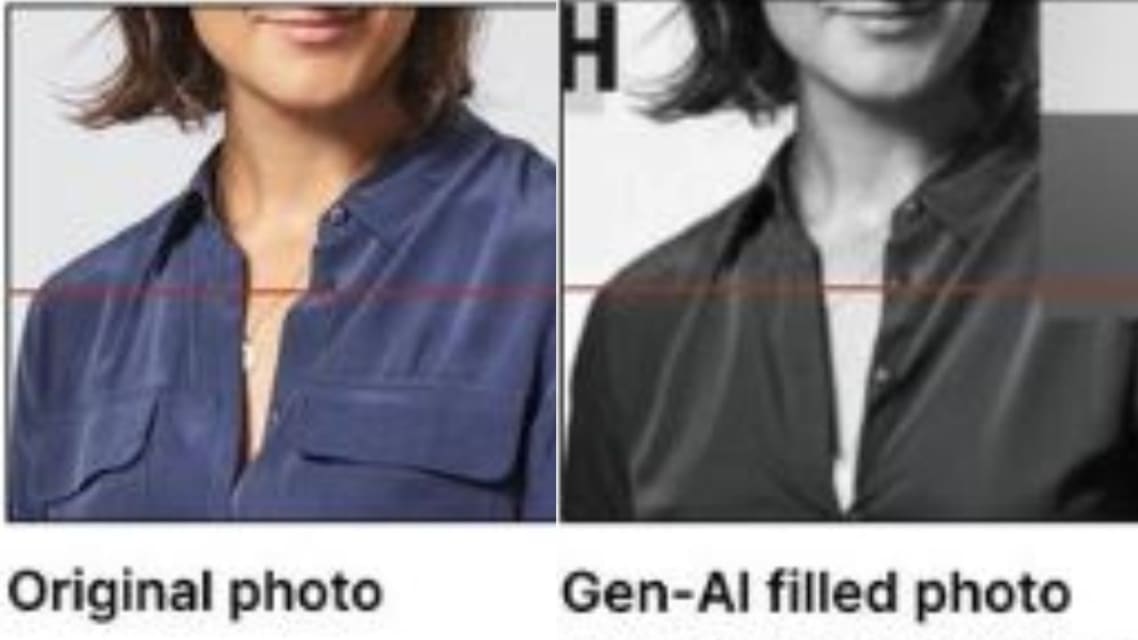

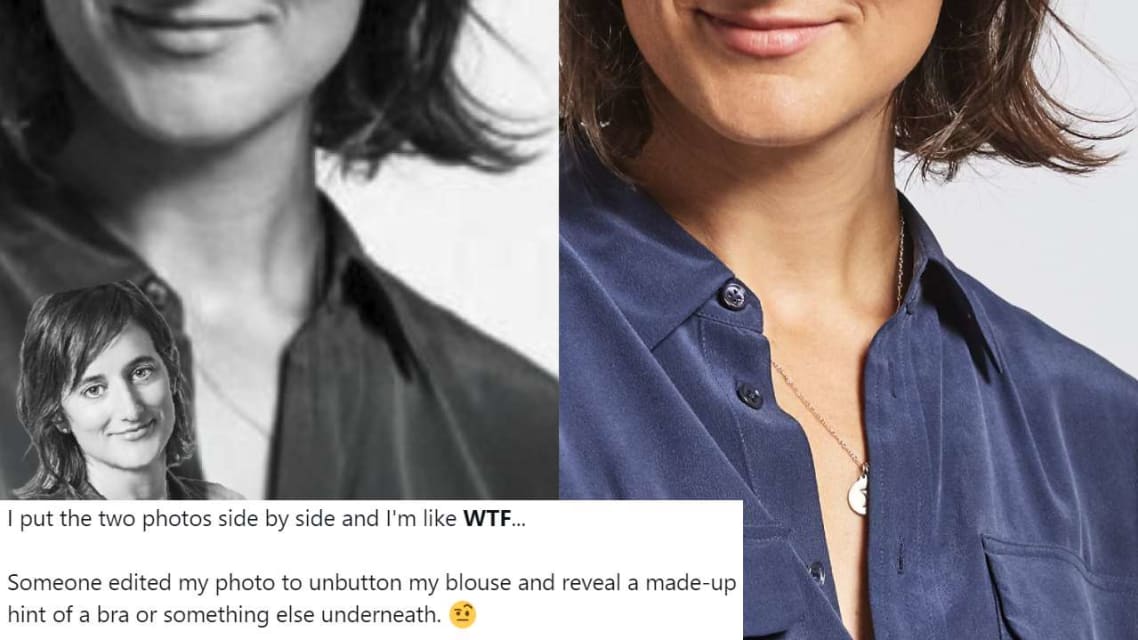

I put the two photos side by side and thought, what on earth...

Someone edited my photo to unbutton my blouse and expose a bra or something underneath…

A UI/UX designer alleged that the photo of her used on a conference poster had been edited by the organizers to add a bra.

Elizabeth Laraki, who previously worked on Google Maps, Facebook, and YouTube, shared the edited photo on X. (X/@elizlaraki)

Elizabeth Laraki, who previously worked on Google Maps, Facebook, and YouTube, will later this year on UX and…

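Washington, DC: A woman shared a strange and unusual experience with netizens on X. She claimed that an image she had submitted to a conference was altered by AI to show a bra. The incident left netizens puzzled and uneasy, and it also sparked a conversation about the challenges of using AI.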

Former Google employee Elizabeth Laraki shared that she will be speaking at a conference on UX and AI design. When she received the conference's poster ad, Laraki…

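Former Google employee Elizabeth Laraki, who worked on the development of Google Maps, was upset when she first saw the ad for an AI conference (Upscale Conf) where she is serving as a panelist. On X/Twitter and LinkedIn, the tech entrepreneur and design maverick described the alarming experience of finding part of a bra showing in her photo. "My bra is showing in my profile photo and I never noticed...? That's weird." The technologist opened the original photo and "B…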

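A product designer who worked at Google, YouTube, and Facebook accused the organizers of an AI conference of editing her photo for a poster. The US-based technologist claimed that not only had the pocket on her shirt been removed, but her blouse had also been unbuttoned and a bra added. The organizers have since taken down all the posters and apologized to her.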

Elizabeth Laraki is scheduled to take part in a conference on UX and AI later this year, and the organizers published an ad for the conference. She… her own photo…

Variants

Similar Incidents

