Incident Status

Risk Subdomain

4.1. Disinformation, surveillance, and influence at scale

Risk Domain

4. Malicious Actors & Misuse

Entity

AI

Timing

Post-deployment

Intent

Intentional

Incident Reports

Report Timeline

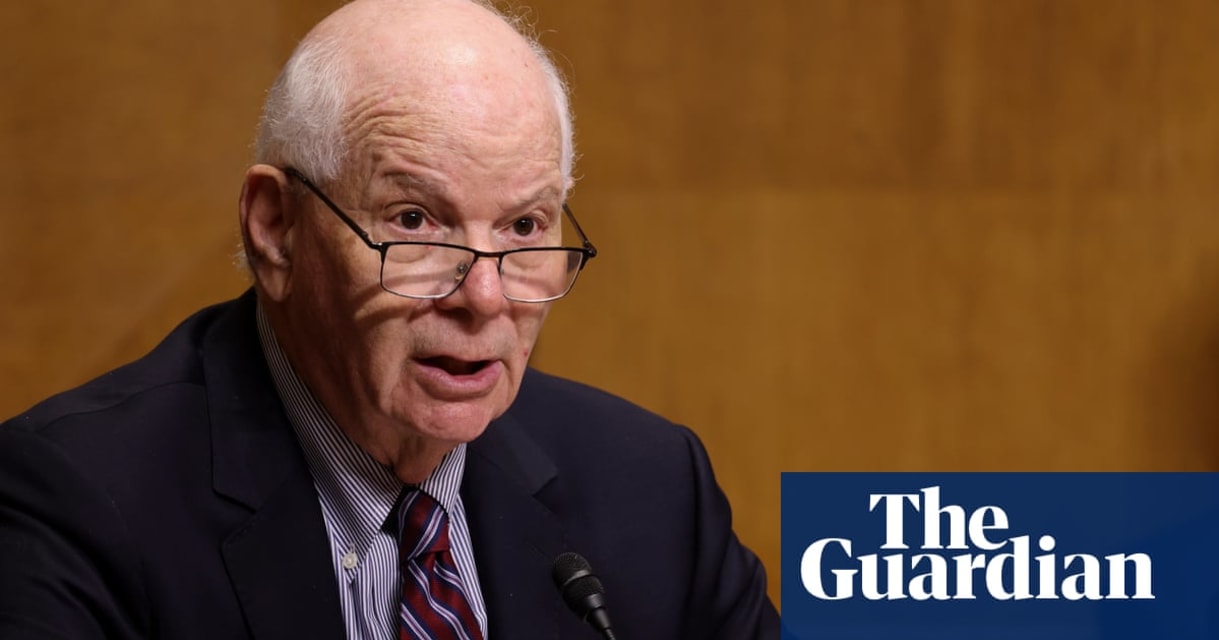

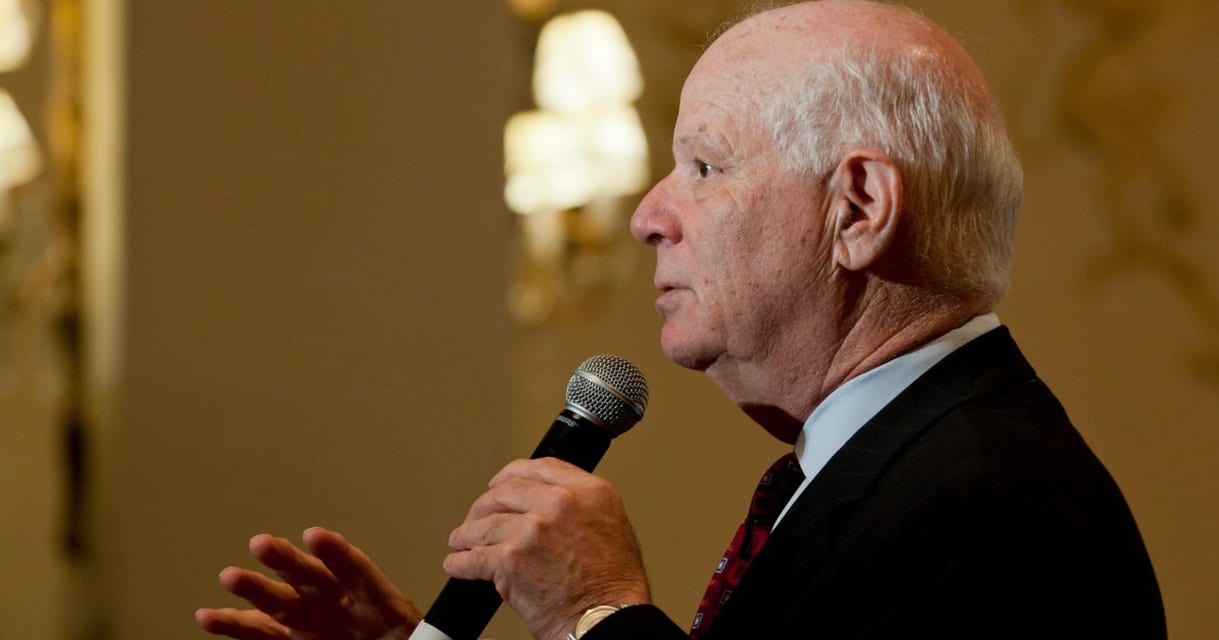

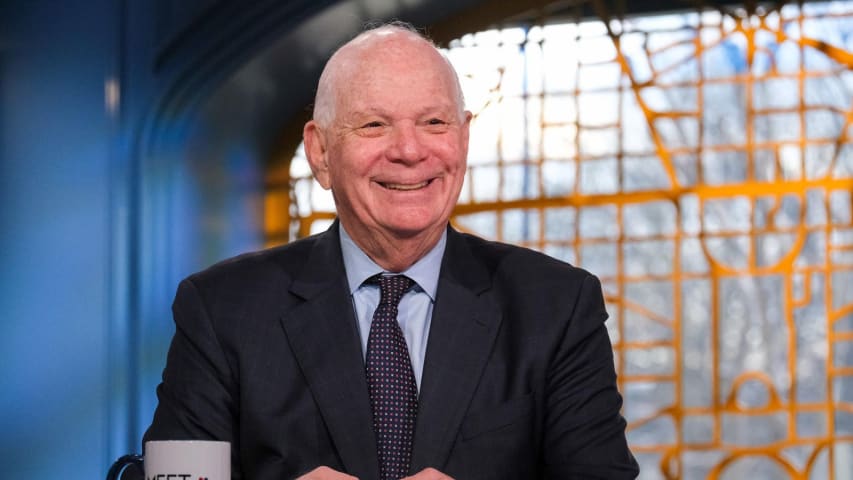

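Exclusive: Senate Foreign Relations Committee Chair Ben Cardin (D-Md.) was recently targeted by a sophisticated deepfake operation impersonating a senior Ukrainian official, according to three people briefed on the matter and communications reviewed by Punchbowl News.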

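On Monday morning, the Senate security office alerted a select group of Senate leadership aides and security chiefs for various Senate committees about the incident, which took place earlier this month on the videoconferencing platform Zoom. According to sources briefed on the investigation, the FBI…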

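The chair of the Senate Foreign Relations Committee was lured into a video call with a "malign actor" who posed as a senior Ukrainian official, likely using "deepfake" artificial intelligence technology, lawmakers and congressional aides disclosed Thursday.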

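Sen. Ben Cardin (D-Md.) was contacted by email last week by someone posing as former Ukrainian Foreign Minister Dmytro Kuleba, who asked to talk over Zoom. On the video call with the senator, the person's voice and appearance matched Kuleba's, but because the person asked out-of-character questions related to the upcoming election, …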

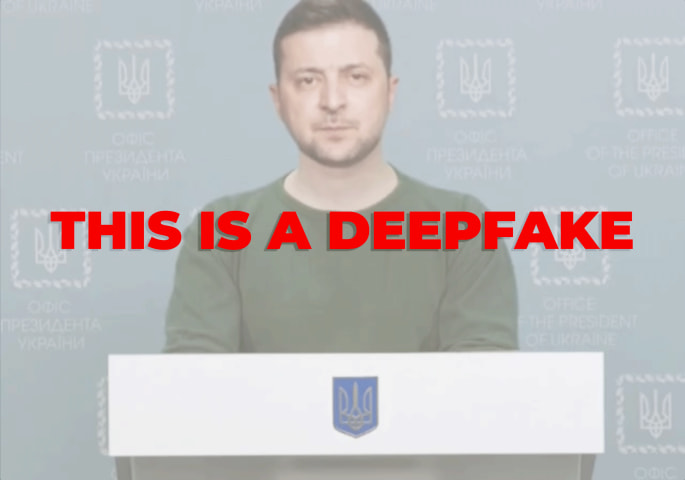

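A recent video conference in which a "deepfake" caller posed as a senior Ukrainian official to Senator Benjamin L. Cardin, chairman of the Foreign Relations Committee, has renewed concerns that lawmakers could be targeted by malicious actors seeking to influence U.S. politics or obtain sensitive information.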

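According to an emailed alert that Senate security officials sent to lawmakers' offices, which was obtained by The New York Times, last Thursday a senator's office received an email that appeared to come from Dmytro Kuleba, who until recently was Ukraine's foreign minister…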

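According to multiple reports, Senate Foreign Relations Committee Chair Ben Cardin (D-Md.) was the target of a sophisticated impersonation in which a video caller used deepfake imagery to pose as a senior Ukrainian official, highlighting the growing threat of AI-generated fake images and video.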

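The incident was first reported by Punchbowl News and later confirmed by The New York Times; a notice that the Senate security office recently sent about a Zoom call with someone posing as former Ukrainian Foreign Minister Dmytro Kuleba…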

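WASHINGTON (AP) - A sophisticated deepfake operation targeted Sen. Ben Cardin, the Democratic chair of the Senate Foreign Relations Committee, this month, according to the Office of Senate Security. It is the latest sign that malicious actors are turning to artificial intelligence to dupe top U.S. political figures.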

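Experts believe such schemes will become more common now that the technical barriers that once surrounded generative artificial intelligence have fallen. A notice sent Monday by the Office of Senate Security to Senate offices said the attempt was "technically sophisticated and…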

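U.S. officials have confirmed that a deepfake "actor" imitating Ukraine's recently departed foreign minister targeted the chair of the powerful Senate Foreign Relations Committee in a suspected election interference attempt.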

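Ben Cardin, a Democratic senator from Maryland, grew suspicious of a person posing as Dmytro Kuleba during a Zoom call scheduled for September 19. Kuleba resigned as Ukraine's top diplomat in a government reshuffle this month.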

The person purporting to be Kuleba had sent an email to Cardin's office requesting a video conference. The two had met before.

…

National security officials are investigating a Zoom call to Sen. Ben Cardin of Maryland in which someone used deepfake technology to impersonate a former senior Ukrainian official.

Officials are still trying to identify who was responsible, but intelligence sources suspect the involvement of Russia, China, or Iran.

Deepfakes are often used for fake celebrity endorsements and humorous videos, but this case is no laughing matter.

Capitol Police confirmed that the Zoom call was an attempt to impersonate a senior foreign official. Cardi…

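Earlier this month, Sen. Ben Cardin (D-Md.), the Democratic chair of the Senate Foreign Relations Committee, was targeted by a sophisticated deepfake operation that partly succeeded in deceiving him.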

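The operation centered on Cardin's professional relationship with former Ukrainian Foreign Minister Dmytro Kuleba. According to reports, Cardin's office received an email from someone who appeared to be Kuleba, whom Cardin already knew from past meetings.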

According to a notice issued by the Senate security office, Kule…

Ben Cardin, a Democratic senator from Maryland, recently joined a video conference with a senior Ukrainian official. The only problem: it was a deepfake.

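Cardin believed he was speaking with former Ukrainian Foreign Minister Dmytro Kuleba, who had asked to chat over Zoom. But according to The New York Times, Cardin grew suspicious when the person impersonating Kuleba began asking about politics, the upcoming election, and sensitive foreign policy matters. For example, Cardin … long-range … into Russia…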

Variants

Similar Incidents
