CSETv0 Taxonomy Classifications

Taxonomy Details

Problem Nature

Robustness

Physical System

Software only

Level of Autonomy

Medium

Nature of End User

Amateur

Public Sector Deployment

No

Data Inputs

User posts

CSETv1 Taxonomy Classifications

Taxonomy Details

Incident Number

84

AI Tangible Harm Level Notes

3.3 - Although there was no tangible harm, the AI was linked to the adverse outcome described in the incident.

Notes (special interest intangible harm)

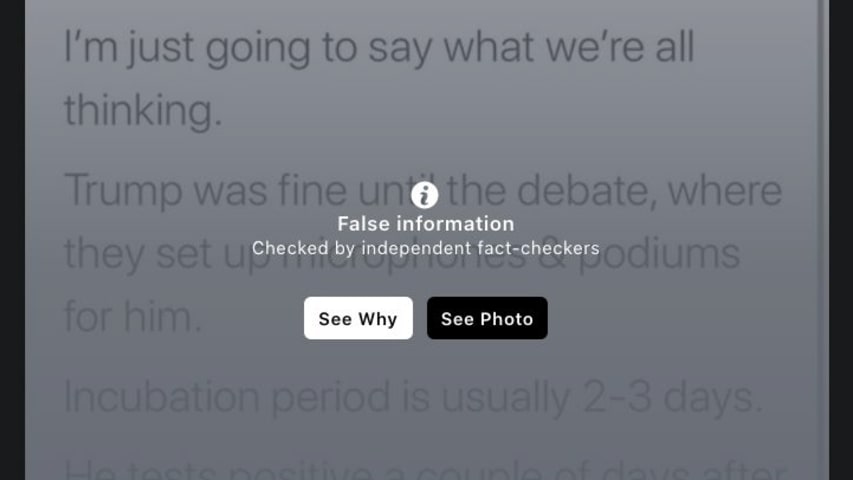

AI failed to prevent the spread of misinformation

Special Interest Intangible Harm

yes

Date of Incident Year

2019

Date of Incident Month

10

Risk Subdomain

3.1. False or misleading information

Risk Domain

Misinformation

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Reports Timeline

Something as simple as changing the font of a message or cropping an image can be all it takes to bypass Facebook's defenses against hoaxes and lies.

A new analysis by the international advocacy group Avaaz shines light on why, despite the …

Variants

Similar Incidents
