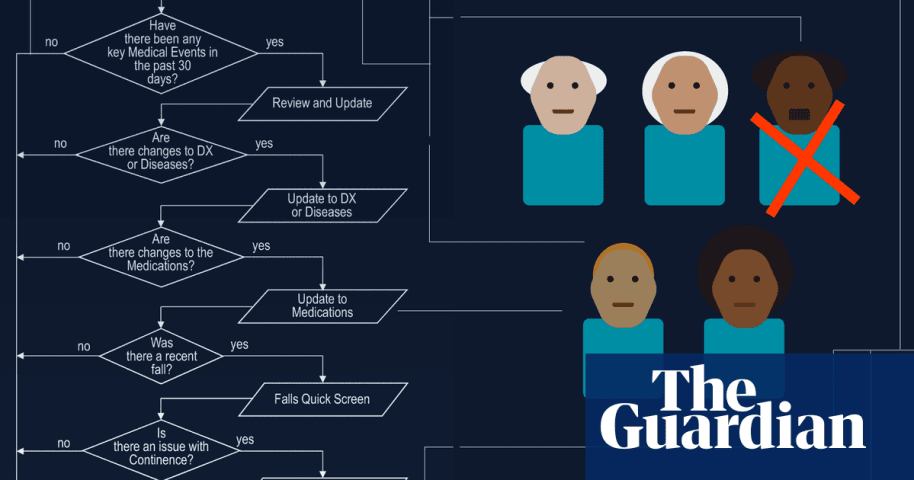

概要: 介護資源を公平に配分するために設計された医療アルゴリズムは、障害者や高齢者の介護時間を大幅に削減し、深刻な困難と被害をもたらしました。当初は公平な資源配分を目的として開発されたこのシステムは、個々のニーズを正確に評価できないという理由で最終的に法的問題に直面しました。その結果、必要なケアが削減され、医療意思決定におけるAIの活用に関する倫理的懸念が高まりました。

Alleged: State governments と Brant Fries developed an AI system deployed by State governments , Idaho state government , Arkansas state government , Washington DC government , Pennsylvania state government , Iowa state government と Missouri state government, which harmed Disabled people , Elderly people , Low-income people , Larkin Seiler と Tammy Dobbs.

CSETv1 分類法のクラス

分類法の詳細Incident Number

The number of the incident in the AI Incident Database.

603

Notes (special interest intangible harm)

Input any notes that may help explain your answers.

4.2 - The algorithm that cut Seiler's care in 2008 was declared unconstitutional by the court in 2016.

Special Interest Intangible Harm

An assessment of whether a special interest intangible harm occurred. This assessment does not consider the context of the intangible harm, if an AI was involved, or if there is characterizable class or subgroup of harmed entities. It is also not assessing if an intangible harm occurred. It is only asking if a special interest intangible harm occurred.

yes

Date of Incident Year

The year in which the incident occurred. If there are multiple harms or occurrences of the incident, list the earliest. If a precise date is unavailable, but the available sources provide a basis for estimating the year, estimate. Otherwise, leave blank.

Enter in the format of YYYY

2008

Date of Incident Month

The month in which the incident occurred. If there are multiple harms or occurrences of the incident, list the earliest. If a precise date is unavailable, but the available sources provide a basis for estimating the month, estimate. Otherwise, leave blank.

Enter in the format of MM

Date of Incident Day

The day on which the incident occurred. If a precise date is unavailable, leave blank.

Enter in the format of DD

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

7.3. Lack of capability or robustness

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- AI system safety, failures, and limitations

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Intentional

インシデントレポート

レポートタイムライン

Loading...

Going up against an algorithm was a battle unlike any other Larkin Seiler had faced.

Because of his cerebral palsy, the 40-year-old, who works at an environmental engineering firm and loves attending sports games of nearly any type, depends…

バリアント

「バリアント」は既存のAIインシデントと同じ原因要素を共有し、同様な被害を引き起こし、同じ知的システムを含んだインシデントです。バリアントは完全に独立したインシデントとしてインデックスするのではなく、データベースに最初に投稿された同様なインシデントの元にインシデントのバリエーションとして一覧します。インシデントデータベースの他の投稿タイプとは違い、バリアントではインシデントデータベース以外の根拠のレポートは要求されません。詳細についてはこの研究論文を参照してください

似たようなものを見つけましたか?

よく似たインシデント

Did our AI mess up? Flag the unrelated incidents

よく似たインシデント

Did our AI mess up? Flag the unrelated incidents