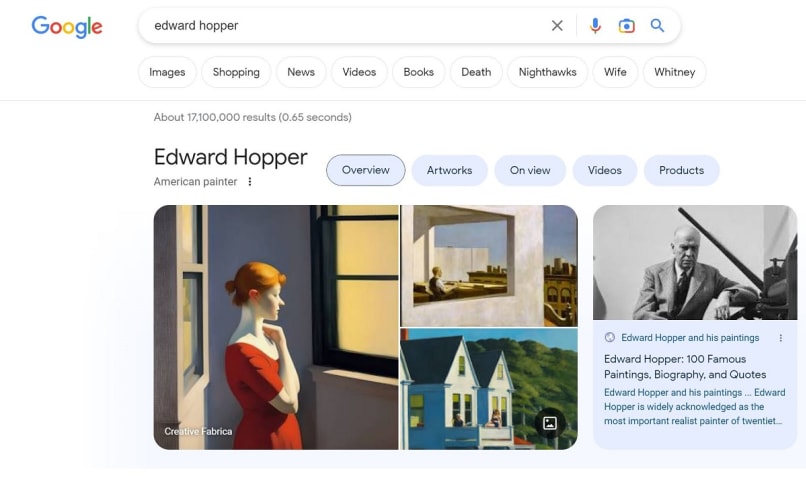

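Summary: Google's knowledge panel for the American artist Edward Hopper displayed an AI-generated image that was reportedly made in the artist's style but was not his work. The image was removed shortly afterward.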

Alleged: An AI system developed and deployed by Google affected Google users.

Incident Status

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

3.1. False or misleading information

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Misinformation

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Unintentional

Incident Reports

Reports Timeline

If you don't think visual AI is a problem, this is what comes up when you @Google Edward Hopper.

AI is already rewriting art history.

When you Googled the famed realist artist Edward Hopper this week, the first featured image that appeared was convincing enough. A lone woman, painted in the artist's signature soft, muted style, stared …

Variants

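A "variant" is an incident that shares the same causal factors as an existing AI incident, produces similar harms, and involves the same intelligent systems. Rather than being indexed as a fully independent incident, a variant is listed as a variation under the first similar incident submitted to the database. Unlike other submission types in the incident database, variants are not required to have supporting reports from outside the incident database. See the research paper for details.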
