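Description: Despite complaints to Amazon about the sale of various products used to facilitate suicide, Amazon reportedly continued to sell them, and its recommendation system suggested items frequently bought together with them.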

Alleged: An AI system developed and deployed by Amazon and Amazon recommendation algorithm, which harmed people attempting suicides.

Implicated AI system: Amazon recommendation algorithm

CSETv1 Taxonomy Classes

Taxonomy Details

Incident Number

The number of the incident in the AI Incident Database.

156

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

5.1. Overreliance and unsafe use

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Human-Computer Interaction

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Unintentional

Incident Reports

Reports Timeline

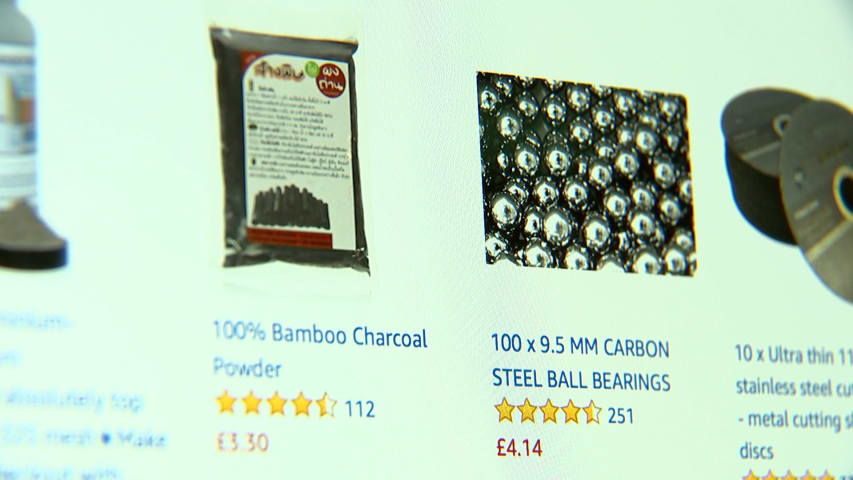

The pleas to Amazon were explicit. A food preservative sold by the online retailer and other e-commerce sites was being used as a poison to die by suicide.

“Please stop selling this product,” began one review, posted on Amazon in July 2019 …


Preliminary Statement

This is an action against Amazon.com, Inc. (“Amazon”) and Loudwolf, Inc. (“Loudwolf”) (collectively “Defendants”), which profit by selling a deadly chemical they know is used by children to die by suicide.

Amazon is gu…

Variants

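A “variant” is an incident that shares the same causative factors as an existing AI incident, produces similar harms, and involves the same intelligent systems. Rather than indexing variants as entirely separate incidents, the database lists them as variations under the first similar incident submitted. Unlike other submission types in the incident database, variants do not require reporting as evidence from outside the Incident Database. For more details, see this research paper.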
