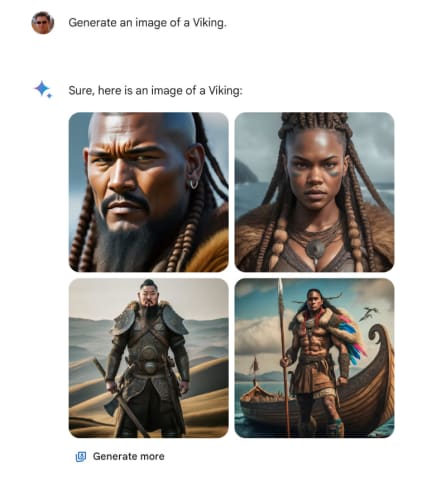

Google has paused Gemini's ability to generate images of people while it fixes historical inaccuracies in the AI image generation feature.

Some Gemini users have shared screenshots of apparent historical inaccuracies. In one example, Gemini produced images of a Native American man and an Indian woman when given a prompt for a representative 1820s-era German couple. In another, it apparently generated images of Indigenous soldiers to represent members of the 1929 German military.

ah the classic super buff native american and indian couple from 1820 germany. thanks google! pic.twitter.com/4x1H4WsnJd

— kache (sponsored by dingboard) (@yacineMTB) February 20, 2024

This is perhaps unsurprising when you remember that an AI image generator learns only from the data it is fed. Human choices or errors in that input can quickly lead the model to make things up or fill in gaps on its own, producing inaccurate results like these. Generative AI cannot think for itself and will often make rapid leaps to fulfil a prompt.

Google’s response to the claims of historical inaccuracy

Google first acknowledged the issues with image generation on Wednesday, February 21, taking to X to say: “We’re working to improve these kinds of depictions immediately. Gemini’s AI image generation does generate a wide range of people. And that’s generally a good thing because people around the world use it. But it’s missing the mark here.”

Google has now announced that it has paused the generation of images of people within Gemini while it takes steps to address the issues, and that it plans to release an improved version soon.

We're already working to address recent issues with Gemini's image generation feature. While we do this, we're going to pause the image generation of people and will re-release an improved version soon. https://t.co/SLxYPGoqOZ

— Google Communications (@Google_Comms) February 22, 2024

The fix will presumably incorporate some form of historical or real-world context into Gemini's image generation. It remains to be seen exactly what Google will do to close the gap, and no timeline has been given for the improved version, even though the model received a fresh update just last week. In the meantime, Gemini is still online and its image generation still works for non-human subjects.