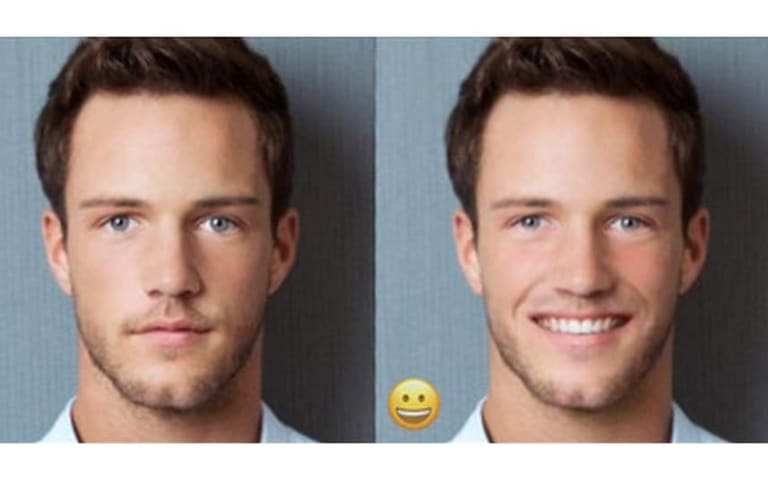

Description: Meta's AI image generator is alleged to produce inaccurate and biased images, consistently failing to depict interracial relationships between Asian individuals and Caucasian or Black individuals. Instead, it reportedly generates images of two Asian people or falls back on stereotypes, erasing the diversity and representation of Asian people.

The OECD AI Incidents and Hazards Monitor (AIM) automatically collects and classifies AI-related incidents and hazards in real time from reputable news sources worldwide.

Entities

Alleged: An AI system developed and deployed by Meta harmed Asian people, interracial couples, and the general public.

Incident Stats

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

1.1. Unfair discrimination and misrepresentation

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Discrimination and Toxicity

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Unintentional

Incident Reports

Reports Timeline

Have you ever seen an Asian person with a white person, whether that’s a mixed-race couple or two friends of different races? Seems pretty common to me — I have lots of white friends!

To Meta’s AI-powered image generator, apparently this is…

Post-incident response from Mia Sato

Yesterday, I reported that Meta's AI image generator was making everyone Asian, even when the text prompt specified another race. Today, I briefly had the opposite problem: I was unable to generate any Asian people using the same prompts as…

Variants

A "variant" is an AI incident similar to a known case: it shares the same causes, harms, and AI system. Rather than listing it separately, we group it under the first incident reported. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.

Similar Incidents

- Biased Google Image Results · 18 reports
- Gender Biases of Google Image Search · 10 reports
- FaceApp Racial Filters · 23 reports
