Incident 57: Australian Automated Debt Assessment System Issued False Notices to Thousands

CSETv0 Taxonomy Classifications

Problem Nature

Unknown/unclear

Physical System

Software only

Level of Autonomy

High

Nature of End User

Amateur

Public Sector Deployment

No

Data Inputs

welfare requests, tax records

CSETv1 Taxonomy Classifications

Incident Number

57

AI Tangible Harm Level Notes

The system in question does not meet the CSET definition for AI. It is likely pure automation, or a rules-based system without machine learning components.

Notes (special interest intangible harm)

Access to social welfare (a public service) was interfered with.

Special Interest Intangible Harm

yes

Risk Subdomain

7.3. Lack of capability or robustness

Risk Domain

- AI system safety, failures, and limitations

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Reports Timeline

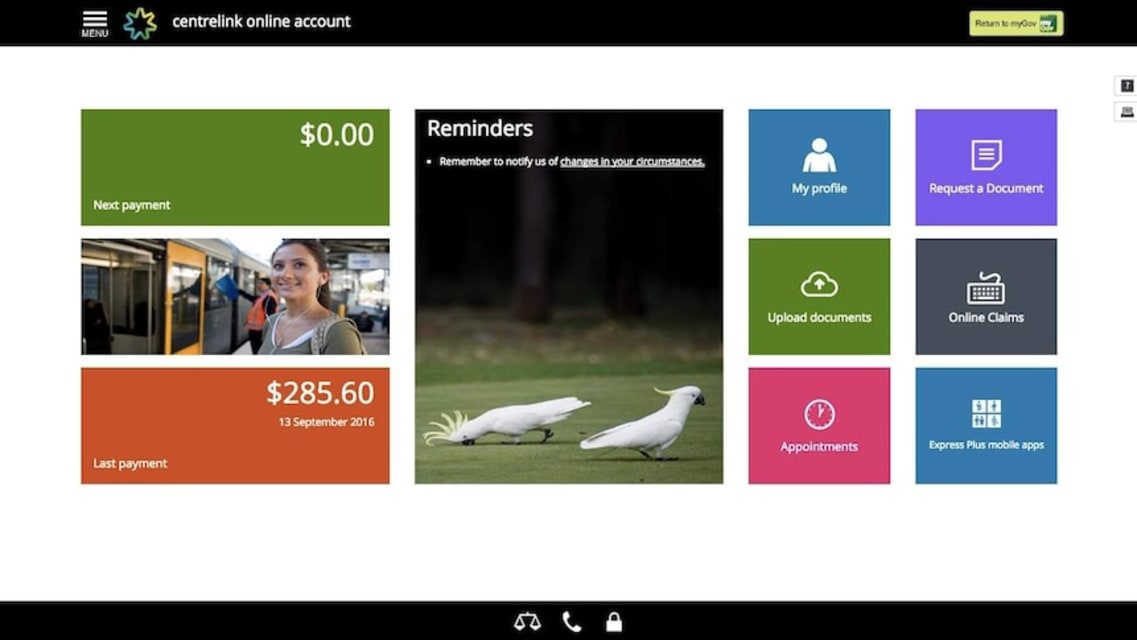

Former and current students say Centrelink is falsely accusing them of being welfare cheats and saddling them with thousands of dollars of debt.

Sianaye studies full-time, works in retail…

Labor has called for the debt recovery system to be halted immediately until the current problems are fixed. (AAP)

The Centrelink welfare debt crisis affecting the Department of…

Centrelink is threatening dozens of Australians with bad credit ratings and legal action if they refuse to sign up to payment plans to pay off thousands of dollars in questionable debts.

'If I don't set up a p…

In the lead-up to Christmas, tens of thousands of Australians began receiving threatening debt collection notices from Centrelink and its agents. Coming from a government that could not run an online census, you can probably…

A Medicare and Centrelink office sign is seen at Bondi Junction on March 21, 2016 in Sydney, Australia. (Source: Getty)

Centrelink staff will be unable to cope with an expected "perfect storm" of enquiries from cl…

Medical researcher Janet Hammill, who works on a voluntary basis, is struggling to reach anyone at Centrelink after being incorrectly told she owes $7,600

Centrelink debt recovery program to undergo changes following public criticism

The Federal Government will introduce changes to the controversial Centrelink debt recovery program, despite insisting…

The Federal Government will expand Centrelink's automated debt recovery program later this year to target disability support payments and aged pensioners.

[Charts from the Budget Office…

Government uses false figures to defend its robodebt system

Although the Government claims it has clawed back $300 million in debts incurred by welfare recipients, it refuses to reveal exactly how much money it has re…

Department of Human Services staff will go on strike over pay, budget cuts and the Centrelink "robo-debt" scandal…

We're all talking about the Centrelink debt controversy, but what is 'robodebt' anyway?

It has been in the headlines since the summer, when thousands of Australians suddenly discovered they owed mon…

The head of the Department of Human Services has largely blamed the "robo-debt" scandal on welfare recipients' failure to engage with Centrelink.

The Senate inquiry into the system…

Centrelink: tax office says it cannot be blamed for failures of automated debt recovery system

The Australian Taxation Office (ATO) has sought to distance itself…

Centrelink failed to communicate adequately with welfare recipients, its staff lacked training, and its so-called 'robo-debt' program placed "unreasonable" expectations on claimants and should have been evaluat…

An ombudsman's report into the implementation of Centrelink's automated debt recovery service has identified multiple failures that placed burdens…

Centrelink debt recovery system lacks transparency and treated some customers unfairly, ombudsman finds

Centrelink's controversial debt recovery system lacks transparency and has…

The scathing assessment comes as the system is put back under the microscope, this time by external auditors PwC Australia

Victoria Legal Aid has described the s…

Australian Privacy Foundation tells the Senate inquiry into the 'robo-debt' scandal that there is no evidence guidelines were followed

The…

More than a third of Centrelink welfare debt recovery cases appealed to the independent tribunal are overturned.

The Administrative Appeals Tribunal set aside 960 Centrelink debt decisions of 2…

Senate inquiry report recommends that all debts calculated under a flawed model be reassessed and the system redesigned

An inquiry…

The expansion of the data-matching program aims to examine whether people's earnings from trusts or family day care make them ineligible for welfare, the government says.

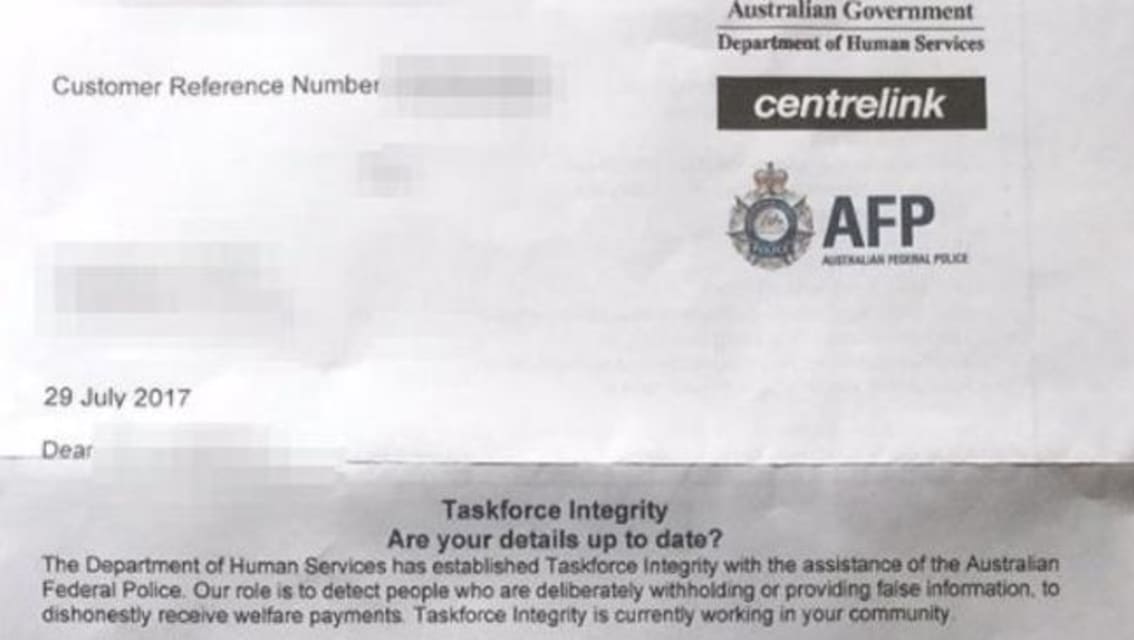

CENTRELINK's "threatening" debt recovery letters have reached the next level and Australians are terrified, according to a radio host who angrily confronted the government.

The notes sent to people in locat…

The Turnbull government has admitted it issued automated debt recovery notices to 20,000 welfare recipients who were later found to owe less or even nothing.

Documents tabled in Parliament by the Mini…

The data show 7,456 debts were reduced to zero and another 12,524 were partially reduced between July last year and March

At least 20,000 Centrelink debts…

The public sector union has condemned moves to privatise the much-criticised Centrelink call centre, saying it would give Serco access to larg…

A Community Affairs References Committee published a report in June on Centrelink's controversial robo-debt program, making 21 recommendations to fix the program, which was rolled out in mid-2016.

The rep…

The department says the increase in the use of private contractors is temporary, but the union says the government is "privatising our safety net"

Centrelink will use 1,000 contract workers to help recov…

Retail worker Laura has had a $24,000 debt hanging over her head since October last year.

Laura, who asked Hack not to use her surname, works casually and received income support payments through…

The Turnbull government's robo-debt program involves the enforcement of "illegal" debts that in some cases are inflated or non-existent, a former member of the Administrative Appeals Tribunal has said.

The scathing indictment of the program…

In a bid to recover $800 million in debt, former welfare recipients who owe money to the federal government will face a travel ban until the funds are repaid.

So far, 20… have been issued

The federal government has settled a landmark challenge to its robodebt program, conceding that a $2,500 debt raised against Deanna Amato was not lawful because it was based on income averaging.

In orders made by consent on Wednesday…

The problem with artificial intelligence is that it is artificial.

The problem with human intelligence is that it is limited, variable and compromised by judgment and values.

Put the two together and you get a good understanding of…

The Federal Government will refund $721 million in debts it recovered through its controversial Robodebt scheme.

The scheme saw hundreds of thousands of people issued with computer-generated debt notices, some of which…

The federal government has finally agreed to repay hundreds of thousands of people who were hit with unlawful and incorrect Centrelink debts over a four-year period through the Coalition's failed robodebt scheme.

Years of…

The Australian government has privately acknowledged that the robodebt scandal may extend beyond the 470,000 unlawful debts already identified for refund, but has no plans to return the money because it believes it would be too d…

Nathan Kearney says he lost two and a half years of his life to robodebt. He is still seeing a counsellor about it.

Four years ago he was living in Brisbane, going to and playing gigs, working various casual jobs…

A "shameful chapter" in public administration has led the federal court to approve a settlement worth $1.8 billion between the Commonwealth and victims of the Coalition's robodebt scheme.

In the ruling of…

Robodebt: government fights to keep secret documents that may show 'what went wrong'

The Morrison government is fighting to keep secret documents that, according to a former public servant, could show "what went…

The mother of a robodebt victim who took his own life has told a royal commission that the former Coalition government stonewalled her as she sought answers about her son's welfare debts for more than five years.

L…
