Incident 1341: Fake ad falsely depicting a doctor's endorsement, used to sell lipedema cream to U.S. patient Beth Holland.

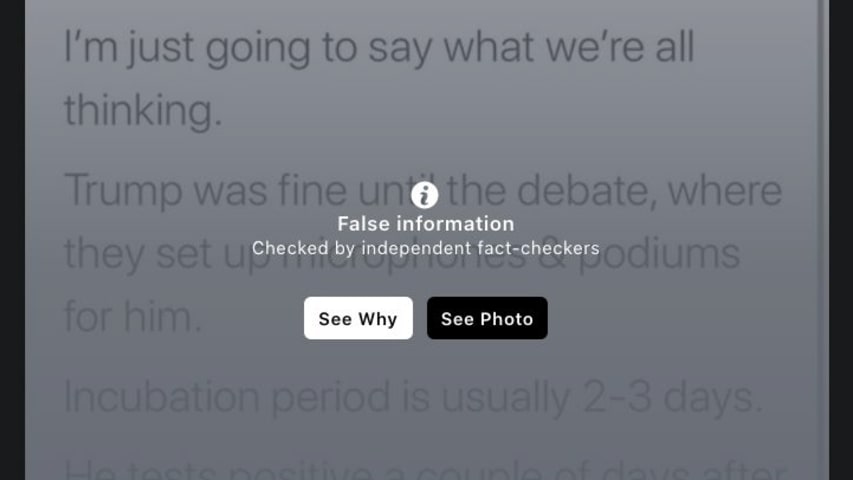

Description: A U.S. patient, Beth Holland, reported financial losses after purchasing Svelta Venastra, a purported lipedema treatment promoted through an online ad that allegedly used a deepfake video to falsely show endorsements from medical professionals and public figures, including her own doctor, Dr. David Amron. The ad, which circulated on Facebook, misrepresented the product's effectiveness, leading Holland to spend approximately 300 dollars on a cream that did not relieve her condition.

Entities

Alleged: Unknown deepfake technology developers and Unknown voice cloning technology developers developed an AI system deployed by Unknown scammers, which harmed Beth Holland, David Amron, Oprah Winfrey, Kelly Clarkson, and Carnie Wilson.

Alleged implicated AI systems: Unknown deepfake technology, Unknown voice cloning technology, Facebook, and Social media platforms

Incident Stats

Incident ID: 1341

Report Count: 1

Incident Date: 2025-12-03

Editors: Daniel Atherton

Incident Reports

Online ads for new medical treatments are more common than ever. They are all over social media, touting the promise of new products and devices.

These include miracle cures and solutions …

Variants

A "variant" is an AI incident similar to a known case: it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside of the AIID. Learn more from the research paper.
