Don’t blame the algorithm — as long as there are racial disparities in the justice system, sentencing software can never be entirely fair.

For generations, the Maasai people of eastern Africa have passed down the story of a tireless old man. He lived alone and his life was not easy. He spent every day in the fields, tilling the land, tending the animals, and gathering water. The work was as necessary as it was exhausting. But the old man considered himself fortunate. He had a good life, and never really gave much thought to what was missing.

One morning the old man was greeted with a pleasant surprise. Standing in his kitchen was a young boy, perhaps seven or eight years old. The old man had never seen him before. The boy smiled but said nothing. The old man looked around. His morning breakfast had already been prepared, just as he liked it. He asked the boy’s name. “Kileken,” the boy replied.

After some prodding, the boy explained that, before preparing breakfast, he had completed all of the old man’s work for the day. Incredulous, the old man stepped outside. Indeed, the fields had been tilled, the animals tended, and the water gathered. Astonishment written all over his face, the old man staggered back into the kitchen. “How did this happen? And how can I repay you?” The boy smiled again, this time dismissively. “I will accept no payment. All I ask is that you let me stay with you.” The old man knew better than to look a gift horse in the mouth.

Kileken and the old man soon became inseparable, and the farm grew lush and bountiful as it never had before. The old man could hardly remember what life was like before the arrival of his young comrade. There could be no doubt: With Kileken’s mysterious assistance, the old man was prospering. But he never quite understood why, or how, it had happened.

To an extent we have failed to fully acknowledge, decision-making algorithms have become our society’s collective Kileken. They show up unannounced and where we least expect them, promise and often deliver great things, and quickly come to be seen as indispensable. Their reach can’t be overestimated. They tell traders what stocks to buy and sell, determine how much our car insurance costs, influence which products Amazon shows us and how much we get charged for them, and interpret our Google searches and rank their results.

These algorithms improve the efficiency and accuracy of services we all rely on, create new products we never before could have imagined, relieve people of tedious work, and are an engine of seemingly unbounded economic growth. They also permeate areas of social decision-making that have traditionally been left to direct human judgment, like romantic matchmaking and criminal sentencing. Yet they are largely hidden from view, remain opaque even when we are prompted to examine them, and are rarely subject to the same checks and balances as human decision-makers.

Worse yet, some of these algorithms seem to reflect back to us society’s ugliest prejudices. Last April, for instance, our Facebook feeds — curated by a labyrinth of algorithms — were inundated with stories about FaceApp, a program that applied filters to uploaded photographs so that the user would appear younger or older or more attractive. At first, this app seemed to be just another clever pitch to the Snapchat generation. But things quickly went sideways when users discovered that the app’s “hot” filter — which purported to transform regular Joes and Jills into beautiful Adonises and Aphrodites — made skin lighter, eyes rounder, and noses smaller. The app appeared to be equating physical attractiveness with European facial features. The backlash was swift, ruthless, and seemingly well-deserved. The app — and, it followed, the algorithm it depended on — appeared to be racist. The company first renamed the “hot” filter to “exclude any positive connotation associated with it,” before unceremoniously pulling it from the app altogether.

FaceApp’s hot filter was far from the first algorithm to be accused of racism, and certainly won’t be the last. Google’s autocomplete feature — which relies on an algorithm that scans other users’ previous searches to try to guess your query — is regularly chastised for shining a spotlight on racist, sexist, and other regressive sentiments that would otherwise remain tucked away in the darkest corners of the Internet and our psyches.

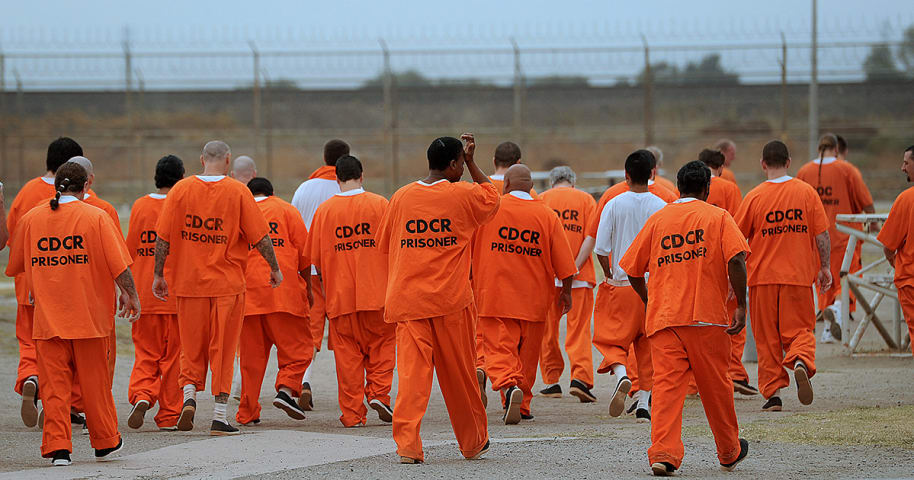

But while rogue apps or discomfiting autocomplete suggestions are both ephemeral and potentially responsive to public outcry, the same can hardly be said about the insidious encroachment of decision-making algorithms into the workings of our legal system, where they frequently play a critical role in determining the fates of defendants — and, like FaceApp, often exhibit a preference for white subjects. But the problem is more than skin deep. The issue is that we cannot escape the long arm of America’s h