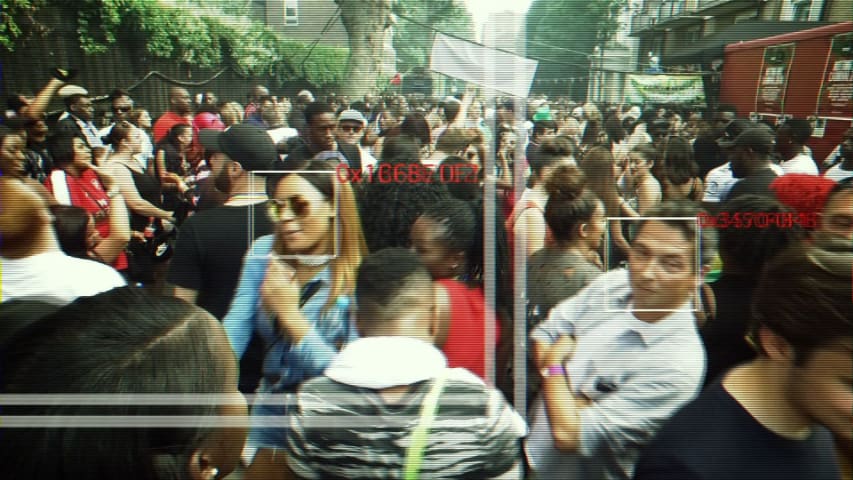

The controversial trial of facial recognition equipment at Notting Hill Carnival resulted in roughly 35 false matches and an 'erroneous arrest', highlighting questions about police use of the technology.

The system produced only a single accurate match during the course of Carnival, but the individual had already been processed by the justice system between the time police compiled the suspect database and the time they deployed it.

In the days before Carnival, Sky News revealed that police hold more than 20 million facial recognition images of the British public, including hundreds of thousands of innocent people.

There are a number of legal questions surrounding the police's databases, especially following a High Court ruling in 2012 which said that the retention of those images was unlawful.

Silkie Carlo, the technology policy officer for human rights group Liberty, observed the trial and described it as a "worryingly inaccurate and painfully crude facial recognition operation".

Ms Carlo explained that the 'erroneously arrested' individual was flagged up as being wanted on warrant for a rioting offence.

However, between the construction of the suspect database and Carnival, the individual had already been arrested and was no longer wanted.

"The project leads viewed this as a resounding success - not a failure," wrote Ms Carlo.

Independent sources have confirmed details in a blog post by Ms Carlo noting that five members of the public were flagged as suspects by the system and approached by officers to prove their identities.

The individuals had identification documents on them, but had they not, they could potentially have been wrongfully arrested.

A spokesperson for the Metropolitan Police Service explained to Sky News that the "erroneously arrested" individual had not been arrested, which it defined as meaning they were taken into custody at a police station and questioned.

They said that the individual was wanted on suspicion of a public order offence and "would have been stopped by officers explaining why they were being stopped" before a radio check against the Police National Computer established that they had already been dealt with.

The Met said: "We have always maintained that it was a continued trial to test the technology and assess if it could assist police in identifying known offenders in large events, in order to protect the wider public."

"A full analysis of its deployments and a wider consultation will take place at the conclusion of the trial," the spokesperson added, although no date was given for this.

Sky sources have suggested that the Met is planning to trial the technology at other events in the future.