Incident Status

Risk Subdomain

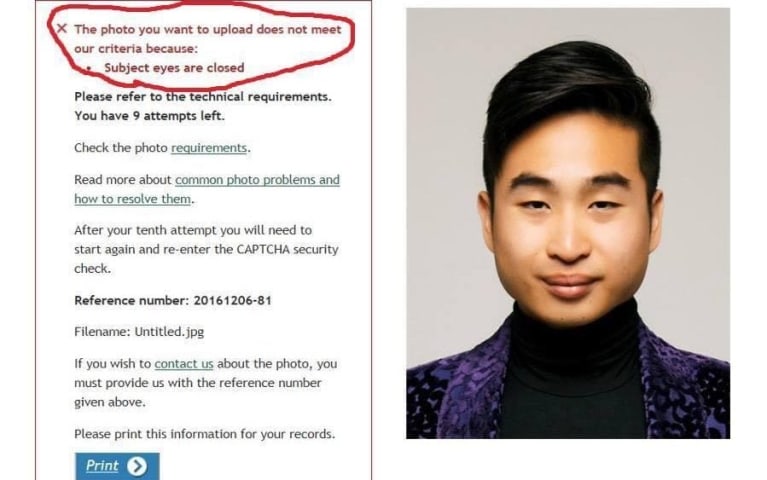

7.3. Lack of capability or robustness

Risk Domain

- AI system safety, failures, and limitations

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Report Timeline

Tokyo & London, July 11, 2017 - NEC Corporation (NEC; TSE: 6701) today announced that it has provided a facial recognition system for South Wales Police in the UK through NEC Europe Ltd. The system utilizes NeoFace® Watch, NEC's flagship fa…

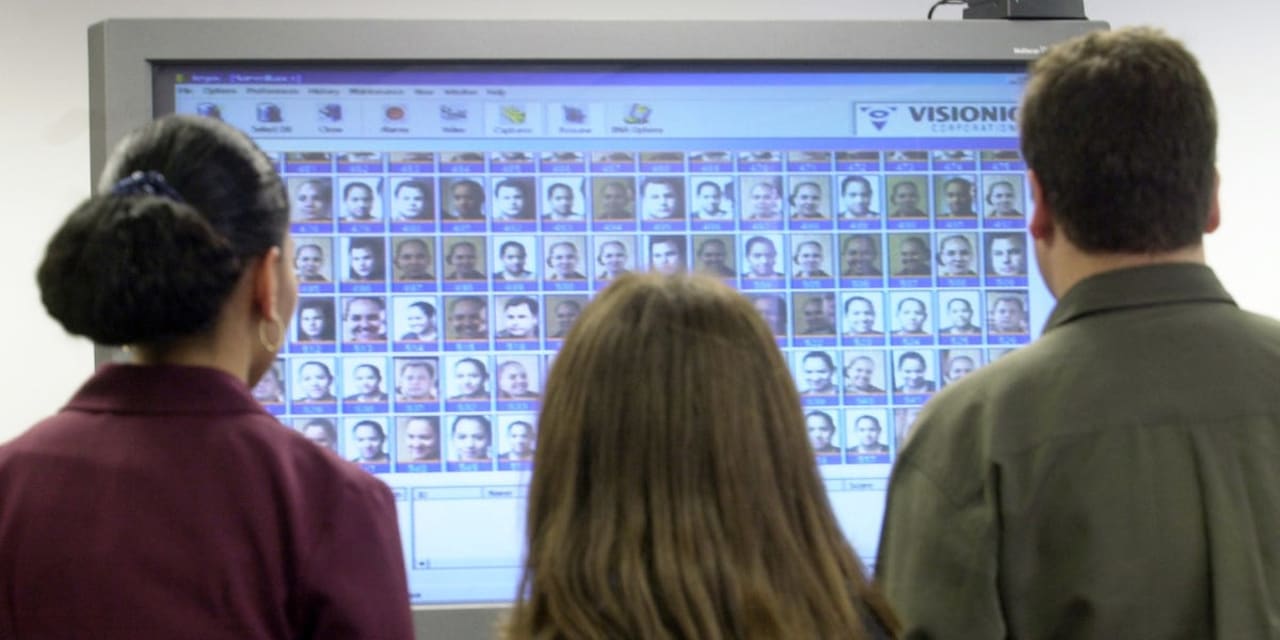

More than 2,000 people were wrongly identified as possible criminals by facial scanning technology at the 2017 Champions League final in Cardiff.

South Wales Police used the technology as about 170,000 people were in Cardiff for the Real Ma…

Facial recognition software wrongly identified more than 2,000 people as potential criminals as police patrolled the Champions League final in Cardiff.

The technology provided hundreds of “false positives” wrongly marking out innocent peopl…

A British police agency is defending its use of facial recognition technology at the June 2017 Champions League soccer final in Cardiff, Wales, among several other instances, saying that despite the sy…

-

Police in South Wales have been relying on facial recognition technology for 12 months.

-

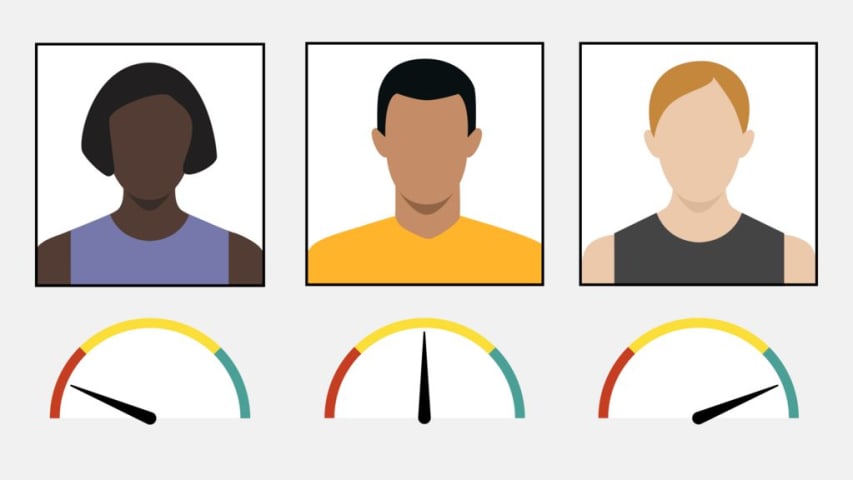

An FOI request has revealed that the technology provides a "false positive" ID in more than 90% of cases.

-

The police have admitted that "of course…
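A figure like "false positive in more than 90% of cases" is easiest to read as the share of system alerts that pointed at the wrong person. That reading is an assumption about how the FOI figure was computed. Under this reading it is a different quantity from the error rate per face scanned, and the two can differ by orders of magnitude. The minimal sketch below shows this using hypothetical counts that are not taken from any of these reports. The function names are illustrative only.

```python
def false_alert_share(true_alerts: int, false_alerts: int) -> float:
    """Fraction of all alerts that flagged the wrong person (hypothetical helper)."""
    total = true_alerts + false_alerts
    return false_alerts / total if total else 0.0


def false_match_rate(false_alerts: int, faces_scanned: int) -> float:
    """Fraction of all scanned faces that triggered a wrong alert (hypothetical helper)."""
    return false_alerts / faces_scanned if faces_scanned else 0.0


# Hypothetical counts for illustration only; not figures from South Wales Police.
faces_scanned = 100_000
true_alerts = 100
false_alerts = 1_000

print(f"{false_alert_share(true_alerts, false_alerts):.1%}")  # 90.9% of alerts are wrong
print(f"{false_match_rate(false_alerts, faces_scanned):.1%}")  # 1.0% of scanned faces
```

With these assumed numbers, most alerts are wrong even though only a small fraction of the crowd is misidentified. When genuine watch-list matches are rare, even a low per-face error rate produces mostly false alerts.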

Sir Terence Etherton MR, Dame Victoria Sharp PQBD and Lord Justice Singh:

This appeal concerns the lawfulness of the use of live automated facial recognition technology (“AFR”) by the South Wales Police Force (“SWP”) in an ongoing trial usi…

On August 11, 2020, the Court of Appeal of England and Wales overturned the High Court’s dismissal of a challenge to South Wales Police’s use of Automated Facial Recognition technology (“AFR”), finding that its use was unlawful and violated…

Use of live facial recognition technology by UK police fails to meet “minimum ethical and legal standards” and should be banned from application in public spaces, say researchers from the University of Cambridge.

A team of researchers at th…